How to Clone Your Voice Safely Using AI: A Complete Guide!

Cloning your own voice is no longer the stuff of science fiction movies; it is now a reality! Artificial intelligence (AI) makes it possible to clone your own voice very easily. But as the technology advances, security and privacy matter more than ever. In today’s blog, we will look at how you can clone your own voice with AI safely and which tools are best suited to the job.

Voice cloning has moved from research labs into everyday tools like ElevenLabs, Descript’s Overdub, Resemble AI, and Respeecher, so creating a realistic replica now takes minutes, and that speed raises safety risks you should care about. ElevenLabs, for example, offers APIs alongside usage controls, while Descript builds Overdub into its audio and video editing workflow.

💡

Did You Know?

Consumer tools like ElevenLabs and Descript’s Overdub can produce convincing speech from as little as 10–30 seconds of audio, making voice cloning widely accessible.

Source: ElevenLabs, Descript product pages (2023)

This review explains: how the tech works at a high level, the main risks and legal issues, how to pick safer tools, a step-by-step safe workflow, and a clear pros and cons assessment. I’ll highlight consent checks, watermarking, and data handling practices to watch for.

What you’ll learn

- High-level model basics (speech synthesis, fine-tuning).

- Tool features to prioritize (consent, watermarking, security).

- Practical workflow for safe cloning.

This guide walks you through the settings and checks you need to experiment responsibly and with confidence, and it balances practical steps with a pros and cons look so you can decide whether voice cloning fits your needs.

How Voice Cloning Works: An Accessible Overview

You get the best results when you understand the pipeline: capture, clean, train, synthesize, and polish. This section explains each technical step in plain language so you can follow the rest of this guide and choose tools like Descript Overdub, ElevenLabs, or Resemble AI with realistic expectations.

Data capture

Record 10–60 seconds for basic clones (Descript Overdub, ElevenLabs); studio-grade results need several minutes.

Preprocessing

Trimming, noise reduction, and phonetic balancing—tools like iZotope RX or Audacity help.

Model training / fine-tuning

Options range from SV2TTS/Coqui TTS fine-tuning to commercial few-shot systems like Resemble AI.

Synthesis

The choice of neural TTS (Tacotron 2 + WaveNet), diffusion models (Grad-TTS, DiffWave), or unit selection affects realism and latency.

Post-processing

Equalization, prosody editing, and denoising improve naturalness—Descript and Adobe Enhance Speech can assist.

Quality factors

Recording fidelity, background noise, phonetic coverage, and model capacity determine output quality.

Model types

Unit-selection/statistical TTS, neural TTS, encoder–decoder few-shot systems (SV2TTS), and diffusion-based models each trade realism against data needs.

User expectations

Expect tradeoffs: more naturalness needs more data and compute, and low-latency (real-time) synthesis may reduce realism.

High-level pipeline

Data capture is often the limiting factor: consumer tools like Descript Overdub and ElevenLabs can produce usable clones from 10–60 seconds of audio, but studio-grade models benefit from several minutes. Preprocessing in Audacity or iZotope RX removes noise and trims silence; phonetic coverage comes from recording a script that exercises a wide range of speech sounds.
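Trimming leading and trailing silence is one of the simplest preprocessing steps, and it also tells you how much usable speech you actually captured. The sketch below assumes the audio is already decoded into a list of float samples in the range -1.0 to 1.0 and uses a fixed amplitude threshold; the function names and the `threshold` value are illustrative assumptions, and editors like Audacity or iZotope RX use far more sophisticated detection.

```python
def trim_silence(samples: list[float], threshold: float = 0.02) -> list[float]:
    """Drop leading and trailing samples whose absolute amplitude is below threshold."""
    start = 0
    while start < len(samples) and abs(samples[start]) < threshold:
        start += 1
    end = len(samples)
    while end > start and abs(samples[end - 1]) < threshold:
        end -= 1
    return samples[start:end]


def duration_seconds(num_samples: int, sample_rate: int) -> float:
    """Length of a mono clip in seconds."""
    return num_samples / sample_rate


# Synthetic example at 16 kHz: 0.5 s near-silence, 1 s "speech", 0.5 s near-silence
rate = 16_000
clip = [0.001] * 8_000 + [0.3] * 16_000 + [0.001] * 8_000
trimmed = trim_silence(clip)
print(duration_seconds(len(clip), rate), duration_seconds(len(trimmed), rate))  # 2.0 1.0
```

Checking the trimmed duration, rather than the raw file length, gives a more honest sense of whether you are near the 10–60 second range or the several minutes studio-grade models prefer.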

Model types and realism

Unit-selection and statistical TTS are older and less flexible. Neural stacks (Tacotron 2 + WaveNet) and modern diffusion-based synths (Grad-TTS, DiffWave) deliver higher realism. Few-shot encoder–decoder systems (SV2TTS, Resemble AI) let you clone with little data but may trade off expressiveness.

What you should expect

Quality depends on microphone fidelity, background noise, phonetic variety, and model capacity. Expect tradeoffs: more naturalness usually requires more data and compute, while lower latency (real-time use) can reduce output realism. Use post-processing (Descript, Adobe Enhance Speech) to polish the final audio.
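One way to put a number on background noise is a signal-to-noise ratio: record a few seconds of silent room tone with the same setup, then compare RMS levels using SNR(dB) = 20 · log10(RMS_speech / RMS_noise). This is a rough sketch that treats the speech take as pure signal; the `min_db=30.0` threshold is an illustrative assumption, not a documented vendor requirement.

```python
import math


def rms(samples: list[float]) -> float:
    """Root-mean-square level of a block of float samples."""
    if not samples:
        raise ValueError("empty sample block")
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def snr_db(speech: list[float], room_tone: list[float]) -> float:
    """Approximate SNR: 20*log10(RMS of the speech take / RMS of silent room tone)."""
    noise = rms(room_tone)
    if noise == 0:
        return math.inf
    return 20 * math.log10(rms(speech) / noise)


def good_enough(speech: list[float], room_tone: list[float], min_db: float = 30.0) -> bool:
    # min_db is an illustrative threshold, not a vendor-published requirement
    return snr_db(speech, room_tone) >= min_db


speech = [0.2, -0.2] * 1000    # stand-in for a speech take, RMS 0.2
room = [0.002, -0.002] * 1000  # stand-in for room tone, RMS 0.002
print(round(snr_db(speech, room), 1))  # 40.0
```

A 100:1 RMS ratio works out to 40 dB; if a noisy room pushes the figure down, fixing the recording environment usually helps more than post-processing.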

Risks, Ethical Issues, and Legal Considerations

Voice cloning can be powerful, but you face concrete harms: impersonation for fraud, targeted financial scams, misinformation/deepfakes, and unauthorized commercial use of someone’s voice. Tools such as ElevenLabs, Descript Overdub, and Respeecher lower technical barriers, so your governance choices determine whether a project is responsible or risky.

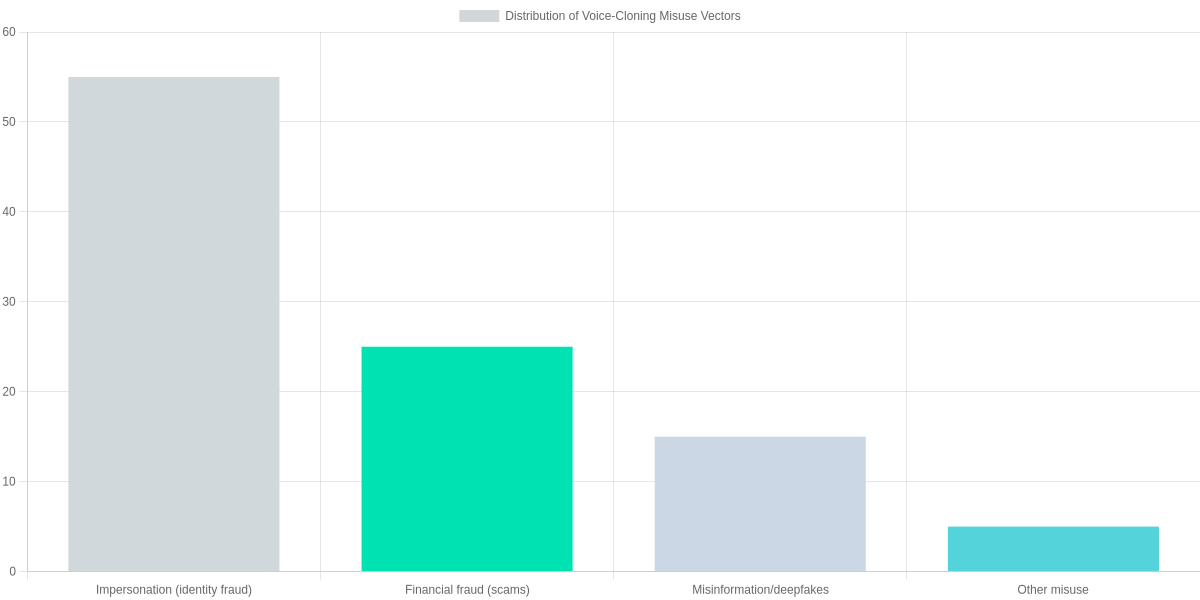

Primary risks and likelihood

- Impersonation (55%): The highest-risk vector is identity misuse — cloned voices used to impersonate family, executives, or public figures to extract money or secrets.

- Financial fraud (25%): Scams such as authorized push payment fraud and other social engineering rely on believable voice replicas to bypass verbal authentication or persuade victims.

- Misinformation/deepfakes (15%): Political or reputational attacks use voice clones to create fabricated statements that spread rapidly online.

- Other misuse (5%): Harassment, copyright violation, and unwanted commercial exploitation fall into a smaller but real tail of threats.

Privacy and legal considerations

Consent laws vary by jurisdiction: some places require all-party (often called two-party) consent before a conversation can be recorded, and commercial use of a voice typically needs an explicit release. Copyright can cover the recordings themselves, and personality or publicity rights can cover the voice, so treat voice samples as protected material and secure written permission before distributing clones commercially.

When you evaluate services, check platform policies: Descript Overdub and Respeecher both require documented consent for producing public or commercial clones, and ElevenLabs publishes usage policies restricting non-consensual replication.

Security risks and technical safeguards

Leakage of raw voice samples or exposed API keys is a major operational risk. Storing unencrypted WAV files in S3 buckets, committing keys to GitHub, or using weak tokens dramatically increases exposure.

Mitigations you can implement include encrypted storage (server-side encryption at rest), HSM-backed key management, short-lived tokens, role-based access control, mandatory sample deletion policies, and watermarking or provenance metadata embedded in generated audio.
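Short-lived tokens limit the damage a leaked credential can do. The sketch below uses only Python’s standard library to issue an HMAC-signed token that expires after a few minutes; the `user_id:expiry:signature` format, the `SECRET` constant, and the function names are hypothetical, and in production the signing key would come from a KMS or HSM rather than source code.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical signing key; in production, load it from a KMS/HSM-backed secret store
SECRET = b"replace-with-a-key-from-your-key-manager"


def _sign(user_id: str, expiry: int) -> bytes:
    """HMAC-SHA256 over 'user_id:expiry'."""
    return hmac.new(SECRET, f"{user_id}:{expiry}".encode(), hashlib.sha256).digest()


def issue_token(user_id: str, ttl_seconds: int = 300, now: Optional[float] = None) -> str:
    """Return 'user_id:expiry:signature', valid for ttl_seconds."""
    issued = time.time() if now is None else now
    expiry = int(issued + ttl_seconds)
    sig = base64.urlsafe_b64encode(_sign(user_id, expiry)).decode()
    return f"{user_id}:{expiry}:{sig}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return user_id if the signature matches and the token has not expired, else None."""
    try:
        user_id, expiry_str, sig_b64 = token.rsplit(":", 2)
        expiry = int(expiry_str)
        sig = base64.urlsafe_b64decode(sig_b64)
    except ValueError:  # malformed token (binascii.Error is a ValueError subclass)
        return None
    # Constant-time comparison avoids leaking how many signature bytes matched
    if not hmac.compare_digest(sig, _sign(user_id, expiry)):
        return None
    current = time.time() if now is None else now
    return user_id if current < expiry else None


token = issue_token("alice", ttl_seconds=300)
print(verify_token(token))  # alice
```

Because the expiry is inside the signed payload, a client cannot extend its own token; rotating `SECRET` invalidates every outstanding token at once.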

Safety Snapshot

Primary risks include impersonation, financial fraud, and deepfakes. Prioritize consent verification, encrypted storage, and auditable logs when using tools like ElevenLabs or Descript Overdub.

- ✓ Consent verification workflows

- ✓ Encrypted storage of raw samples

- ✓ Auditable API logs and rate limits
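Rate limits on a voice-generation API cap how much audio a compromised key can produce before anyone notices. A token bucket is one common way to implement them; this is a single-process sketch with an injectable clock for testing, and the capacity and refill numbers are illustrative, not any vendor’s actual limits.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilling `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


fake_time = [0.0]
bucket = TokenBucket(capacity=3, rate=0.5, clock=lambda: fake_time[0])
print([bucket.allow() for _ in range(4)])  # [True, True, True, False]
fake_time[0] = 2.0  # two seconds later, one token has refilled
print(bucket.allow())  # True
```

A multi-server deployment would keep the bucket state in shared storage instead of process memory.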

Policy-level mitigations

Adopt consent verification, auditable logs, and provenance tagging at the platform level to make misuse traceable. Regulators should distinguish benign personal use from malicious distribution and require transparency from vendors about detection and remediation processes.
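Auditable logs are most useful when tampering is detectable. One simple approach, sketched here with the standard library, is a hash chain: each entry stores the SHA-256 of the previous entry, so editing, reordering, or deleting an earlier record breaks verification. The field names are hypothetical, and a production system would also copy the latest hash to write-once storage so that truncating the tail is detectable too.

```python
import hashlib
import json

GENESIS = "0" * 64


def _digest(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append_entry(log: list[dict], event: dict) -> dict:
    """Append an event whose hash covers the event body and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    event = dict(event)  # shallow copy so later caller edits do not alter the log
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; an edited, reordered, or removed earlier entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"action": "consent_recorded", "voice_id": "demo-voice"})
append_entry(log, {"action": "clone_generated", "voice_id": "demo-voice"})
print(verify_chain(log))  # True
log[0]["event"]["voice_id"] = "other-voice"  # tamper with history
print(verify_chain(log))  # False
```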

When choosing a provider, prioritize ones that mandate releases, provide API audit trails, and support content watermarking so you can defend legitimate uses and respond rapidly to abuse reports.

Choosing a Safe Voice-Cloning Tool: Feature Comparison

You’re evaluating vendors to clone a voice without exposing yourself or others to legal and security risk. Prioritize how each provider enforces consent, traces provenance, and protects audio and identity data before you commit time or budget.

Evaluation Steps

1️⃣

Verify Consent

Confirm explicit written or recorded consent is captured and stored before cloning any voice.

2️⃣

Test Watermarking & Detection

Check for inaudible watermarks or provenance markers and test vendor detection tools.

3️⃣

Review Data Retention & Deletion

Confirm retention periods, deletion-on-request, and whether training data is reused.

4️⃣

Validate Encryption & API Controls

Ensure TLS in transit, AES encryption at rest, and scoped API keys with access controls.

5️⃣

Confirm Compliance Docs

Look for GDPR/CCPA docs, audit logs, and enterprise SLAs before contracting.

What to compare — quick checklist

- Consent verification: look for explicit, auditable consent capture and identity checks.

- Watermarking & detectability: prefer vendors offering forensic watermarking or provenance markers.

- Data retention & deletion: require deletion-on-request and clear retention windows; avoid vendors that reuse samples without opt-out.

- Encryption & API controls: TLS in transit, AES-equivalent at rest, scoped API keys and role-based access.

- Model ownership & licensing: confirm you retain commercial rights to cloned voices and check reuse clauses.

- Customer support & SLAs: enterprise contracts should include audit logs and breach notification timelines.
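To see why keyed watermarks are detectable by the vendor but not by outsiders, here is a toy spread-spectrum watermark: a pseudo-random ±1 pattern derived from a secret key is added at low amplitude, and detection correlates the audio against the same pattern. This is an illustrative assumption, not how any listed vendor watermarks audio; at this strength the mark is audible, and real forensic watermarks are psychoacoustically shaped and designed to survive compression and re-recording.

```python
import math
import random


def pn_sequence(key: int, n: int) -> list[int]:
    """Pseudo-random +/-1 chips; only holders of `key` can regenerate them."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed_watermark(samples: list[float], key: int, strength: float = 0.02) -> list[float]:
    """Add a low-amplitude keyed noise pattern to every sample."""
    chips = pn_sequence(key, len(samples))
    return [s + strength * c for s, c in zip(samples, chips)]


def detect_watermark(samples: list[float], key: int, strength: float = 0.02) -> bool:
    """Correlate with the keyed pattern: marked audio averages near `strength`, clean audio near 0."""
    chips = pn_sequence(key, len(samples))
    score = sum(s * c for s, c in zip(samples, chips)) / len(samples)
    return score > strength / 2


rate = 48_000
host = [0.5 * math.sin(2 * math.pi * 220 * t / rate) for t in range(rate)]  # 1 s test tone
marked = embed_watermark(host, key=1234)
print(detect_watermark(marked, key=1234), detect_watermark(host, key=1234))
```

With one second of audio, the correlation noise is far smaller than the embedded strength, so the keyed copy should be flagged and the clean tone should not; checking with the wrong key behaves like checking clean audio.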

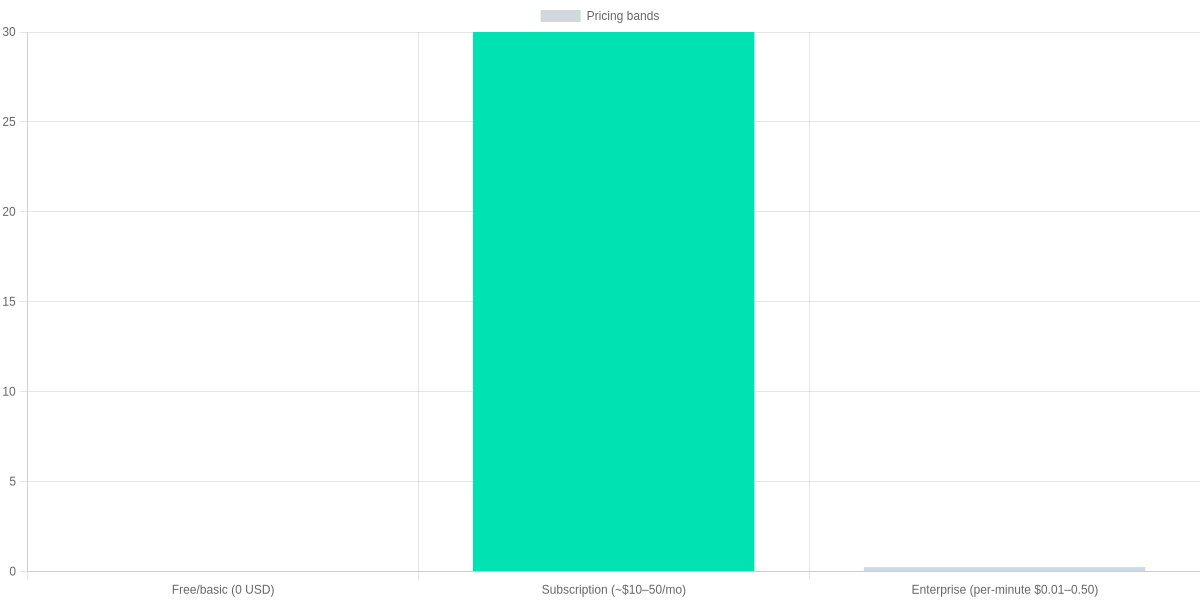

Budget guideline: expect free or basic tiers at $0, subscriptions of roughly $10–$50/month, and enterprise or per-minute pricing often quoted between $0.01 and $0.50 per generated minute. Use these bands to estimate monthly costs for your project.
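Turning those bands into a monthly estimate is simple arithmetic. The sketch below uses Python’s `Decimal` type to avoid floating-point rounding on currency; the rates passed in are placeholders taken from the ranges above, not any vendor’s actual price list.

```python
from decimal import ROUND_HALF_UP, Decimal


def monthly_cost(minutes: int, per_minute: str, subscription: str = "0") -> Decimal:
    """Subscription fee plus usage billed per generated minute, rounded to cents."""
    total = Decimal(subscription) + Decimal(per_minute) * minutes
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# 300 generated minutes per month at the low and high ends of the per-minute band
print(monthly_cost(300, "0.01"))  # 3.00
print(monthly_cost(300, "0.50"))  # 150.00
# A hypothetical $20/month plan plus 300 minutes at $0.10/minute
print(monthly_cost(300, "0.10", subscription="20"))  # 50.00
```

Passing prices as strings keeps `Decimal` exact; `Decimal(0.1)` built from a float would carry binary rounding error.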

| Feature | ElevenLabs | Resemble.ai | Descript Overdub |
|---|---|---|---|
| Consent verification | Explicit consent flow; user-submitted voice sample; public-voice checks | Built-in consent capture and legal agreements; enterprise consent APIs | Requires written consent and identity verification; Overdub training requires authorization |
| Watermarking / detectability | No universal inaudible watermark; provides provenance and usage logs | Offers detectable watermarking and forensic detection tools | Focuses on provenance & usage labels; no standard inaudible watermark |
| Data retention policies | User controls and deletion requests; paid plans add retention settings | Configurable retention; enterprise contracts include data segregation | Stores project audio; deletion on request; GDPR tools available |
| Encryption (at-rest/in-transit) | TLS in transit; AES-like encryption at rest (enterprise-grade) | TLS in transit; encryption at rest and enterprise security options | TLS in transit; encryption at rest; SSO and enterprise security for paid tiers |
| Model ownership & licensing | Users retain rights to their cloned voices; platform licensing for engine use | Customer retains voice asset ownership; commercial licensing options | Users retain rights to Overdub voices within Descript terms; commercial use permitted under license |
| Customer support & SLAs | Email/community support; priority support on paid tiers | Enterprise SLAs with dedicated support; paid plans include higher priority | Help center + email; Pro/Enterprise plans include priority support |
| Pricing (typical) | Free tier; paid plans roughly $5–$30+/mo; pay-as-you-go generation | Custom/business pricing; per-minute enterprise pricing (~$0.01–$0.20/min) | Free tier; Creator/Pro ~$12–$24/mo; enterprise & per-minute options |

Security checklist & evaluation method

- Minimum controls: encryption in transit and at rest, deletion-on-request, explicit consent flows, and scoped API keys.

- Regulatory readiness: require GDPR/CCPA statements and audit logs for any vendor you shortlist.

- Score vendors 0–5 on Privacy, Consent Enforcement, Watermark Capability, Price, and Support; use the side-by-side table above to total scores and decide.
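The 0–5 scoring method is easy to keep in a small script so totals stay consistent across reviewers. The vendor names and scores below are placeholders for illustration only, not assessments of any real product; the criteria mirror the five listed above and are summed without weights.

```python
CRITERIA = ("privacy", "consent_enforcement", "watermark_capability", "price", "support")


def total_score(scores: dict[str, int]) -> int:
    """Sum 0-5 scores across the five criteria, rejecting missing or out-of-range values."""
    missing = set(CRITERIA) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    for name in CRITERIA:
        if not 0 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 0 and 5")
    return sum(scores[name] for name in CRITERIA)


def rank(vendors: dict[str, dict[str, int]]) -> list[tuple[str, int]]:
    """Return (vendor, total) pairs, highest total first."""
    totals = [(name, total_score(scores)) for name, scores in vendors.items()]
    return sorted(totals, key=lambda pair: pair[1], reverse=True)


# Placeholder scores for two hypothetical vendors
vendors = {
    "Vendor A": {"privacy": 4, "consent_enforcement": 5, "watermark_capability": 3,
                 "price": 2, "support": 4},
    "Vendor B": {"privacy": 3, "consent_enforcement": 3, "watermark_capability": 4,
                 "price": 4, "support": 3},
}
print(rank(vendors))  # [('Vendor A', 18), ('Vendor B', 17)]
```

Validating the range up front catches a stray "6" or a skipped criterion before it silently skews the ranking.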

A Step-by-Step Safe Workflow to Clone Your Voice

Safe Voice Cloning Workflow

Plan Purpose & Scope

Define use cases (podcast, internal demo, narration) and limit distribution.

Obtain Explicit Consent

Record signed/recorded consent detailing rights, duration, and allowed uses (use DocuSign or recorded video).

Minimize Data

Provide the smallest clean dataset that meets your quality bar (30–60 seconds for basic needs).

Choose Provider & Contracts

Pick Descript Overdub, ElevenLabs, or Resemble.ai with deletion and retention clauses.

Protect the Artifact

Embed detectable watermarks, label outputs as synthetic, and restrict distribution channels.

Operational Security

Rotate API keys, use AWS KMS/Azure Key Vault, monitor logs with CloudTrail/Datadog.

Legal & Post-Release Checks

Keep consent records, implement takedown plans, and be ready to revoke access.
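The consent step in this workflow is easier to audit when each release is stored as structured data next to the signed document. The sketch below is a hypothetical record format (the field names are assumptions, not a DocuSign or vendor schema) that answers one question before every generation job: is this use permitted on this date?

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentRecord:
    speaker: str
    allowed_uses: frozenset[str]   # e.g. {"podcast", "narration"}
    valid_from: date
    valid_until: date
    document_sha256: str           # hash of the signed PDF or recorded video statement
    revoked: bool = False

    def permits(self, use: str, on: date) -> bool:
        """True only if consent is unrevoked, inside its date window, and covers this use."""
        return (not self.revoked
                and self.valid_from <= on <= self.valid_until
                and use in self.allowed_uses)


signed_release = b"...bytes of the signed release..."  # placeholder content
record = ConsentRecord(
    speaker="Jane Doe",
    allowed_uses=frozenset({"podcast", "internal_demo"}),
    valid_from=date(2024, 1, 1),
    valid_until=date(2024, 12, 31),
    document_sha256=hashlib.sha256(signed_release).hexdigest(),
)
print(record.permits("podcast", date(2024, 6, 1)))      # True
print(record.permits("advertising", date(2024, 6, 1)))  # False
print(record.permits("podcast", date(2025, 2, 1)))      # False
```

Storing the document hash ties the structured record to the exact signed artifact, and revocation can be recorded by saving a new record with `revoked=True`.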

You should follow an auditable workflow when cloning a voice; this review prescribes concrete controls you can implement today. Use the platform features in Descript Overdub, ElevenLabs, or Resemble.ai, but back them with contracts and operational controls.

Practical Steps

- Plan purpose and scope: document why you need a clone (internal demo, podcast, narration), where it will live, and which channels are permitted. Narrowing scope limits downstream risk.

- Obtain explicit consent: capture a signed DocuSign agreement or a recorded video statement that lists rights granted, duration, and allowed use cases. Archive the artifact with timestamps.

- Minimize data: supply only the smallest clean dataset that meets quality—30–60 seconds may suffice for basic models. Transcribe with OpenAI Whisper or Descript to verify content before upload.

- Choose provider and contractual protections: insist on deletion clauses, retention limits, SOC2/ISO27001 attestations, and encryption-in-transit and at-rest.

- Protect the artifact: embed detectable watermarks, mark outputs as synthetic in metadata and captions, and restrict hosting to gated feeds or private buckets.

- Operational security: rotate API keys, manage encryption keys with AWS KMS and store secrets in HashiCorp Vault, enable MFA and least privilege, and monitor logs with CloudTrail or Datadog.

- Legal and post-release checks: retain consent records, prepare revocation/takedown templates, and test the takedown plan with provider support channels.
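Marking outputs as synthetic in metadata can be as simple as a JSON sidecar saved next to each generated file. The manifest fields below are hypothetical (similar in spirit to provenance standards such as C2PA, but not that format); the SHA-256 digest binds the label to the exact audio bytes, so an edited or swapped file no longer matches.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_manifest(audio_bytes: bytes, voice_id: str, consent_id: str, generator: str) -> dict:
    """Describe a generated clip as synthetic and bind the description to its bytes."""
    return {
        "synthetic": True,
        "voice_id": voice_id,
        "consent_id": consent_id,
        "generator": generator,
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "sha256": hashlib.sha256(audio_bytes).hexdigest(),
    }


def matches(audio_bytes: bytes, manifest: dict) -> bool:
    """Check that a file still matches the digest recorded in its manifest."""
    return hashlib.sha256(audio_bytes).hexdigest() == manifest["sha256"]


clip = b"RIFF....placeholder wav bytes"  # stand-in for real generated audio
manifest = build_manifest(clip, voice_id="demo-voice", consent_id="consent-001",
                          generator="example-tts")
print(json.dumps(manifest, indent=2))
print(matches(clip, manifest), matches(clip + b"edit", manifest))  # True False
```

A sidecar is easy to strip, so it complements, rather than replaces, an embedded watermark and visible labeling in captions.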

Pros and Cons Review: Is Voice Cloning Right for You?

You gain concrete benefits: it saves time on repetitive voiceover tasks, enables accessibility (for visually impaired users), maintains consistent branding across content, and lets you iterate rapidly during production.

Drawbacks are significant: potential for misuse, legal exposure when consent is unclear, loss of control over your vocal likeness, and inconsistent output quality that can sound uncanny.

A rough tradeoff estimate favors benefits (60%) over risks (40%), but that margin depends entirely on the safeguards you apply.

Adopt clear retention policies, versioning, access controls, and audit trails to reduce misuse and prove consent. Monitor usage actively.
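A retention policy only helps if something enforces it. The sketch below assumes each stored sample carries a timezone-aware upload timestamp and flags anything older than a configurable window; the `StoredSample` type, the `legal_hold` flag, and the 30-day default are illustrative assumptions, not legal requirements, and the actual delete call depends on your storage provider and is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class StoredSample:
    key: str               # hypothetical object name in your storage bucket
    uploaded_at: datetime  # timezone-aware UTC timestamp
    legal_hold: bool = False


def due_for_deletion(samples: list[StoredSample], now: datetime,
                     retention: timedelta = timedelta(days=30)) -> list[str]:
    """Return keys of samples past the retention window, skipping anything on legal hold."""
    cutoff = now - retention
    return [s.key for s in samples if s.uploaded_at < cutoff and not s.legal_hold]


now = datetime(2024, 6, 30, tzinfo=timezone.utc)
samples = [
    StoredSample("raw/take-01.wav", datetime(2024, 5, 1, tzinfo=timezone.utc)),
    StoredSample("raw/take-02.wav", datetime(2024, 6, 20, tzinfo=timezone.utc)),
    StoredSample("raw/take-03.wav", datetime(2024, 4, 1, tzinfo=timezone.utc), legal_hold=True),
]
print(due_for_deletion(samples, now))  # ['raw/take-01.wav']
```

Running a sweep like this on a schedule, and writing each deletion to an audit log, gives you evidence that the retention policy is actually enforced.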

When to proceed

- Clearly consented projects with contractual safeguards and watermarking.

When to avoid

- High‑stakes authentication, legal‑sensitive contexts, or any situation where you lack control over distribution.

Quick recommendation: if you follow the safe workflow, choose vendors with strong consent enforcement (Descript Overdub, Resemble.ai, ElevenLabs), and visibly mark generated outputs, you can capture most benefits while mitigating common downsides.

Vendor snapshot: workflow & controls

Descript Overdub

Editor-first voice cloning with consent enforcement and easy integration into podcast/video workflows.

- Requires ~10+ minutes of clear training audio

- Built-in identity verification and team controls

- Great for iterative editing and branding consistency

ElevenLabs

High-fidelity TTS and cloning focused on realism and rapid iteration.

- Clones from short samples (tens of seconds) for prototypes

- Robust API for content generation

- Strong output quality but watch for uncanny artifacts

Tool comparison

| Feature | Descript Overdub | Resemble.ai | ElevenLabs |
|---|---|---|---|
| Minimum training audio | ~10+ minutes | ~1–10 minutes | ~20–60 seconds |
| Consent & verification | Identity verification; explicit consent required | Consent tools and enterprise governance | User guidelines; content moderation and policies |
| Watermarking / detection | Limited native watermarking; editorial controls | Enterprise watermarking options | Generated-audio fingerprinting / detection tools |
| API access | Available (team/enterprise) | Yes, low-latency API | Yes, full-featured API |

Frequently Asked Questions

You’ll find the legal and technical boundaries vary widely when cloning voices. Consent is the cornerstone: get a written release before commercial use and check state biometric or publicity laws. Vendors like Microsoft Custom Neural Voice, Descript Overdub, ElevenLabs, and Respeecher enforce consent policies and often require signed approvals.

Audio needs vary: 10–60 seconds can yield a rough mimic, but for studio-grade results you should supply several minutes of clean, mono recording. Descript’s Overdub, Respeecher, and Microsoft’s documentation all note improved fidelity with longer, high-quality samples. If you plan broad distribution, choose providers with watermarking or provenance tools and enterprise audit logs.
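Before uploading, it is worth confirming that a take really is mono and long enough. Python’s built-in `wave` module reads uncompressed PCM WAV headers; the thresholds below (mono, at least 16-bit samples, at least 60 seconds) are illustrative assumptions drawn from the ranges discussed in this guide, not any vendor’s published requirements.

```python
import io
import wave


def check_wav(source, min_seconds: float = 60.0) -> list[str]:
    """Return problems found in a PCM WAV file (path or file-like); an empty list means OK."""
    problems = []
    with wave.open(source, "rb") as wav:
        channels = wav.getnchannels()
        width_bits = wav.getsampwidth() * 8
        seconds = wav.getnframes() / wav.getframerate()
    if channels != 1:
        problems.append(f"expected mono, got {channels} channels")
    if width_bits < 16:
        problems.append(f"expected at least 16-bit samples, got {width_bits}-bit")
    if seconds < min_seconds:
        problems.append(f"only {seconds:.1f}s of audio; want at least {min_seconds:.0f}s")
    return problems


def make_silent_wav(seconds: float, rate: int = 16_000, channels: int = 1) -> io.BytesIO:
    """Build an in-memory 16-bit WAV of silence, only for demonstrating the check."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * channels * int(seconds * rate))
    buf.seek(0)
    return buf


print(check_wav(make_silent_wav(90)))  # []
print(check_wav(make_silent_wav(20, channels=2)))
# ['expected mono, got 2 channels', 'only 20.0s of audio; want at least 60s']
```

In practice you would pass a file path such as the exported take from your recorder; compressed formats like MP3 need to be converted to WAV first.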

Pros and Cons

- Pro: Clear consent workflows at Microsoft and Descript reduce legal risk.

- Pro: ElevenLabs and Respeecher deliver realistic timbre with modest samples.

- Con: High-quality cloning needs minutes of studio audio, not social clips.

- Con: Detection tools vary; Pindrop or forensic analysis may be required.

Common Questions

Is cloning my voice legal?

How much audio do I need for a reliable clone?

Can cloned voices be detected or watermarked?

How can I protect myself from unauthorized cloning?

Are there trusted providers that verify consent and provide audit trails?

Conclusion

Cloning your voice safely with AI means balancing technical capability with ethical responsibility. Prioritize explicit consent, pick vendors with privacy controls (ElevenLabs, Resemble AI, Descript Overdub), secure models and files with encryption in transit and at rest, and apply forensic watermarking to generated audio.

🎯 Key Takeaways

- → Prioritize explicit consent and document permissions before cloning any voice.

- → Choose vendors like ElevenLabs or Resemble AI with strong privacy and deletion policies.

- → Secure models and audio files with encryption and access controls; use watermarking to make misuse detectable and traceable.

- → Run a risk assessment, collect explicit consent, and start with limited, labeled tests before wider deployment.

Pros

- Clear consent workflows; manageable access controls.

- Vendor tools (ElevenLabs, Resemble AI, Descript Overdub) simplify fine-tuning and deletion.

- Watermarking and encryption reduce misuse risk.

Cons

- Legal and reputational exposure if consent is mishandled.

- Model leakage or unauthorized reuse remains a threat.

- Quality vs. safety trade-offs can limit realism.

Next steps: run a risk assessment for your intended use, collect explicit consent, choose a vendor with strong privacy features, and start with limited, clearly labeled tests.

TL;DR: Voice cloning is now widely accessible through consumer tools like ElevenLabs, Descript Overdub, and Resemble AI—capable of producing convincing speech from as little as 10–60 seconds of audio—yet this ease raises serious safety, legal, and consent concerns. This review outlines how the technology works, what features to prioritize (consent checks, watermarking, secure data handling), and gives a step-by-step safe workflow plus pros and cons so you can experiment responsibly.

Voice cloning technology is changing the way we create content. But remember, only the correct and safe use of the technology will bring you long-term success. Always use trusted tools and ensure the security of your personal data when cloning voices with AI. Hopefully, this guide will help you get started with voice cloning safely.

Ready to Create Your AI Voice? 🎙️✨

If you found this guide helpful or have any questions about the safety of voice cloning, please leave a comment below! 💬🔒 Stay tuned to SearchAIFinder for more expert reviews and tutorials on the latest AI innovations. 🚀🤖🌐

I’m Md. Osman Goni, Founder of SearchAIFinder and an AI content specialist. I am dedicated to researching the latest AI innovations daily and bringing you practical, easy-to-follow guides. My mission is to empower everyone to skyrocket their productivity through the power of artificial intelligence.

💬 We’d Love to Hear From You!

Which of these AI tools are you excited to try first? Let us know in the comments below!