Debunking AI Myths 2026: What’s Reality vs. Hype Today

In 2026, artificial intelligence (AI) is an integral part of our lives. But as the technology advances, confusion and baseless rumors are spreading with it. Many people think AI will soon take the place of humans, while others think it may become superhuman in the near future. There is a huge gap between that fiction and reality. In this post, we break down some popular myths about AI and look at what the evidence says about the technology’s real capabilities and limitations today.

You’ve seen headlines spinning doomsday or miracle stories about AI. This post shows where hype and fear diverge from practical reality.

💡

Did You Know?

Most deployed AI systems today are narrow—used for fraud detection, recommendation engines, OCR, or language assistance—not general intelligence.

Source: Industry surveys (2022–2024)

You’ll get data-driven rebuttals to top myths and practical guidance, with evidence from OpenAI analyses, Hugging Face benchmarks, and adoption trends. The review evaluates tools such as ChatGPT, Google Gemini (formerly Bard), Azure AI services (formerly Azure Cognitive Services), TensorFlow, and Hugging Face models to separate marketing from measurable impact.

Pros and Cons

- Pros: Productivity gains (ChatGPT, Azure AI services), faster prototyping.

- Pros: Better insights from TensorFlow and Hugging Face models.

- Cons: Risk of bias, privacy issues, and vendor lock-in.

- Cons: Overhype can lead to wasted spend and unrealistic expectations.

You’ll walk away with practical checks for vendors and clear pros and cons to weigh before adopting AI. No single tool is magic—context matters.

Top Myths About AI Explained

This review cuts through hype to show what modern systems actually do. Expect powerful pattern-matching systems, not humans in silicon.

Quick Myth Clarifications


Myth: AI = Human Intelligence

AI models mimic patterns; they lack common-sense reasoning, emotional understanding, and self-awareness.


Myth: AI Will Replace All Jobs Overnight

Automation shifts tasks gradually—AI augments many roles and redirects work rather than instantly eliminating roles.


Myth: AI Is Objective and Neutral

Models reflect training data biases and developer choices; objectivity is not guaranteed.


Myth: AI Is Already Conscious or Fully Autonomous

Current systems execute learned patterns and need human oversight for goals, safety, and legal responsibility.

Myth: AI is the same as human intelligence. OpenAI’s GPT‑4 generates fluent text and can simulate reasoning, but it lacks genuine intentions and self-awareness. You should treat responses as statistically likely continuations, not human judgment.

Myth: AI will replace all jobs overnight. Tools like GitHub Copilot and Microsoft 365 Copilot augment productivity for developers and knowledge workers; automation redefines tasks over years. Expect role transformation and new coordination work rather than instant mass unemployment.

Myth: AI is inherently objective. Models trained on web-scale corpora reflect historical biases; Google Gemini and Anthropic Claude include guardrails, but mitigation needs active curation and human review. In sensitive applications you must audit outputs.

Myth: AI is already conscious or autonomous. Current Claude and GPT deployments execute human-specified goals and require oversight for safety, legal accountability, and correctness.

Top Myths — Product Comparison

| Feature | OpenAI ChatGPT (GPT-4) | Google Gemini (Gemini Advanced) | Anthropic Claude (Claude 2/3) |
|---|---|---|---|
| Primary focus / best use-case | General-purpose conversational AI, coding, creative writing | Search and Workspace integration, multimodal assistance | Safety-centered assistant for enterprise and research |
| Customization & fine-tuning | Custom GPTs and API-based fine-tuning options via OpenAI | Google Cloud Vertex AI for model deployment and customization | Enterprise Claude offering customization APIs and guardrails |
| Safety & alignment approach | Safety layers, content policies, RLHF; transparency initiatives | Builds on PaLM-family safety work and alignment research; integrated safeguards | Constitutional AI principles, red-teaming, emphasis on conservative outputs |
| Enterprise access & integrations | API, ChatGPT Plus/Enterprise, plugins and Microsoft integrations | Available via Google Cloud; Gemini (formerly Bard) integrations in Workspace and Search | Enterprise offerings, API access, availability via Amazon Bedrock and Google Cloud Vertex AI |
| Typical availability / pricing | Subscription tiers (ChatGPT Plus), API pay-as-you-go | Free basic Gemini access; advanced features via Gemini Advanced subscription and Google Cloud pricing | Enterprise pricing; developer access with usage-based plans |

Pros

- You gain clarity: separating tool capabilities (GPT‑4, Gemini, Claude) from sci‑fi expectations.

- You can evaluate risk: know where bias, opacity, and oversight are required.

- You can adopt strategically: use Copilot-style tools to augment tasks instead of expecting full automation.

Cons

- You must invest in oversight: audits, human review, and domain-specific testing.

- Some vendor claims are marketing-heavy and need verification for your use-case.

- Bias and safety gaps can persist despite guardrails, requiring ongoing mitigation.

Myths vs Reality — Data and Trends

Myth vs Reality Snapshot

Public fears often overstate AI’s current reach. Adoption is rising, but accuracy and impact vary by task and industry.

- ✓ Public belief: jobs replaced

- ✓ Enterprise adoption: selective, sector-specific

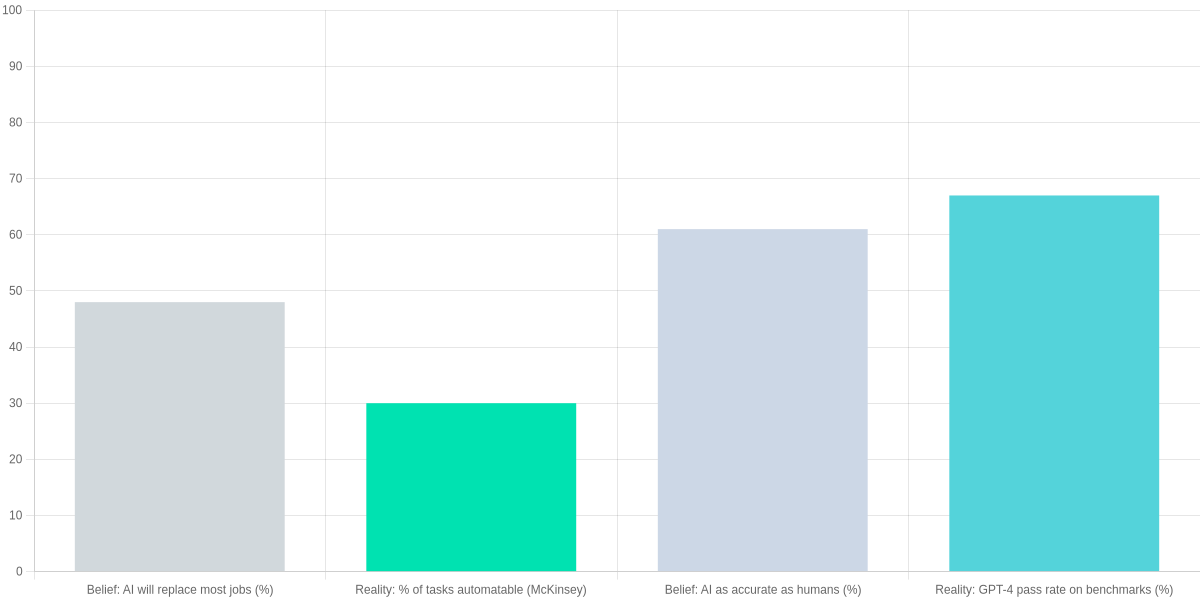

You often see headlines claiming that “AI will take everyone’s job” or that systems like OpenAI’s GPT-4 or Google Gemini (formerly Bard) are already flawless replacements for human experts. Surveys show a large share of the public endorses these extreme views: roughly half of respondents in several recent polls say AI will replace most jobs. That perception contrasts with measured estimates of what AI can actually automate today.

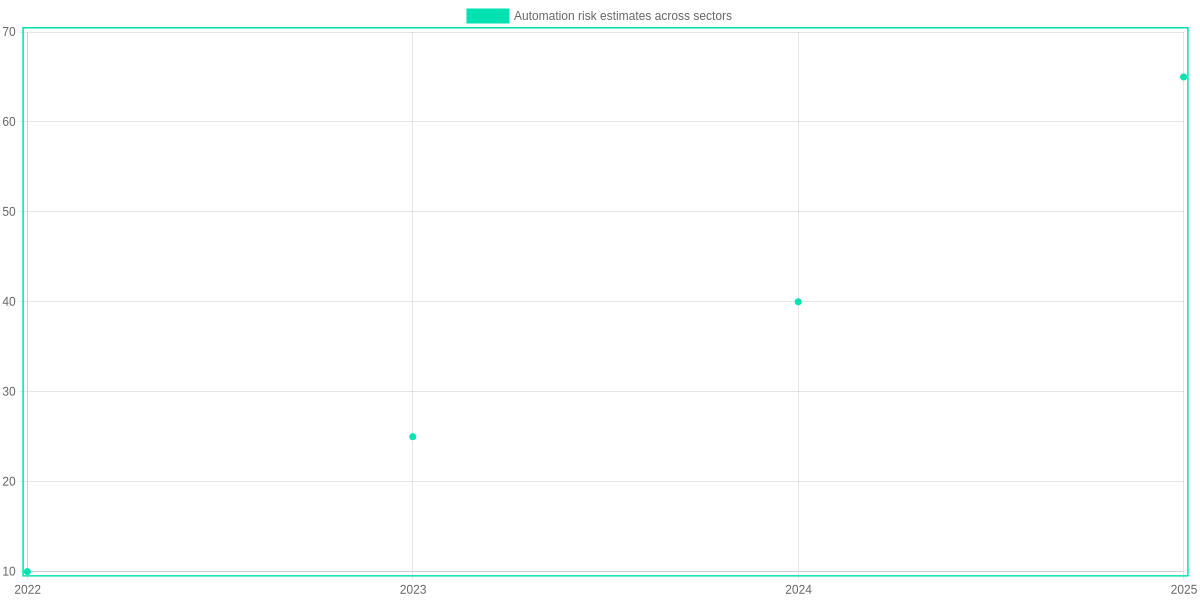

On adoption: firms like Microsoft (Azure AI), AWS (SageMaker), and Google Cloud report broad interest, but industry surveys of the kind McKinsey publishes put the share of companies that have integrated AI into at least one function at roughly 30–56%, depending on the survey. Actual deployment is concentrated in areas like marketing, fraud detection, and predictive maintenance. Healthcare and finance show higher pilot activity, but full production use remains selective.

On accuracy: benchmark improvements are real but task-specific. For example, vision models (ResNet, ViT families) and language models (GPT-4 family) have driven big gains on ImageNet and MMLU-style tests. GPT-4-level models now pass many standardized benchmarks at competitive rates, but they still err on domain-specific reasoning, long-form planning, and adversarial cases, where specialized tools like IBM Watson or domain-specific models can outperform generalist LLMs.

You should treat headlines comparing “human-level” performance with caution. Benchmarks offer useful snapshots — GPT-4 may score in the high range on certain exams, while ImageNet top-1 accuracy is close to, but not uniformly exceeding, human-level performance. Real-world systems still require human oversight, integration engineering, and dataset tailoring (for example, fine-tuning in Hugging Face libraries or customized pipelines in Amazon Bedrock).


AI vs. Human: Reality Check 2026

| Feature | Artificial Intelligence (AI) | Human Intelligence | The Reality |
|---|---|---|---|
| Decision Making | Processes massive data to provide fast outputs. | Considers emotions, ethics, and context. | AI provides the data; humans make the final call. |
| Creativity | Generates new content based on existing patterns. | Capable of true original and abstract thinking. | AI is a powerful tool, but the “spark” is human. |
| Empathy | Simulates emotional responses via algorithms. | Experiences genuine feelings and compassion. | AI has no consciousness; it only mimics empathy. |
| Learning | Learns from trillions of data points (Training). | Learns from limited experience and environment. | AI needs data; humans need intuition and life. |
| Accuracy | May produce “Hallucinations” or false info. | Uses common sense to filter out logical errors. | In 2026, AI outputs still require human verification. |

Pros and Cons

- Pros: Measured adoption shows targeted, high-impact wins in fraud detection, recommender systems, and predictive maintenance using tools like Azure AI and AWS SageMaker.

- Cons: Public perception overestimates immediacy; off-the-shelf models such as vanilla ChatGPT or Midjourney can produce confident but incorrect outputs and need guardrails.

AI and the Workforce: Jobs, Automation, and You

You need clarity on which roles are at real risk and which will be enhanced. This section evaluates sector-level susceptibility, contrasts augmentation with replacement using real cases, and outlines realistic reskilling timelines and actions you can take now.

Sector breakdown: most and least susceptible

Automation risk varies by task composition. Jobs heavy in routine data entry, basic accounting, or repetitive manufacturing steps face higher automation likelihood. Roles requiring complex interpersonal judgment or non-routine creativity remain more resilient.

- High susceptibility: data-entry clerks, basic bookkeeping, assembly-line tasks, and some call-center roles (UiPath and Blue Prism RPA tools target these).

- Moderate susceptibility: radiology triage, basic legal research, and customer support where tools like Google Cloud’s Contact Center AI or Amazon Connect supplement agents.

- Low susceptibility: senior management, primary school teaching, creative direction, and negotiated sales that require nuanced social reasoning.

Augmentation vs. replacement — real-world case studies

Augmentation preserves human oversight while improving throughput. Software developers using GitHub Copilot report faster prototyping and fewer boilerplate errors, not wholesale staff cuts.

In healthcare, IDx-DR and FDA-cleared imaging tools assist ophthalmologists by flagging diabetic retinopathy, speeding triage but leaving diagnosis and treatment decisions to clinicians. Conversely, basic invoice processing has seen replacement via SAP Concur automation and robotic process automation, removing many manual steps.

Reskilling and transition timelines

Expect variable timelines. For incremental task automation, 6–12 months of targeted upskilling (LinkedIn Learning, Coursera) can make you immediately more productive. Transitioning from a high-risk role to a new career path commonly takes 1–3 years with focused coursework and project experience.

Practical steps: audit your role with O*NET or LinkedIn Skills Insights; pilot augmentation tools (Microsoft Copilot, ChatGPT) to identify complementary tasks; enroll in focused credentials (Coursera Specializations, Udacity Nanodegrees, AWS Training) and build a portfolio of applied projects.

Actionable Steps to Adapt

🔍

Assess Automation Risk

Identify routine vs. non-routine tasks using O*NET, LinkedIn Skills Insights, and role audits.

🤝

Augment, Don’t Replace

Adopt GitHub Copilot, ChatGPT, or Microsoft Copilot to automate repetition while keeping human oversight.

📚

Reskill Strategically

Focus on data literacy, prompt engineering, and domain AI skills via Coursera, Udacity, and AWS Training.

🗓️

Plan Transition Timelines

Aim for 1–3 year reskilling windows for many roles; use hands-on projects and mentorship to accelerate.

Pros and Cons (practical review)

- Pros: Increased productivity with tools like GitHub Copilot and Microsoft Copilot; faster triage in medicine with IDx-DR; scalable workflows via UiPath.

- Cons: Short-term displacement in high-routine roles; uneven access to quality reskilling (cost of Udacity or paid Coursera tracks); potential overreliance on models like ChatGPT without human checks.

Ethics, Bias, and Safety: Separating Fear from Fact

You should expect bias to come from data, labels, and incentives. ImageNet and some OpenAI training sets contain skews; GPT-4, Claude, and Llama have shown disparate performance across groups. You can test models with IBM AI Fairness 360 and Google’s What-If Tool to spot disparities.

Where bias originates

- Data: historical imbalance in ImageNet, web scrapes that overrepresent certain voices.

- Labels: inconsistent annotation causes label noise and downstream errors.

- Incentives: product metrics can reward shortcut learning and ignore fairness.

Practical Steps to Reduce Bias

Audit Data

Check training sets (ImageNet, OpenAI datasets) for skew and label errors.

Test Models

Run fairness tests on GPT-4, Claude, or Llama; measure disparate impact.

Fix Incentives

Align metrics and team incentives to reduce shortcut learning.

Adopt Standards

Implement ISO/IEC 42001-style governance and use NIST AI RMF guidance.
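The "measure disparate impact" step above can be sketched in a few lines. This is a minimal illustration, not the method used by IBM AI Fairness 360 or the What-If Tool: the group labels, the sample data, and the 0.8 cutoff (the common "four-fifths" rule of thumb) are all assumptions chosen for the example.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, outcome) pairs, where outcome is
    1 for a favorable model decision and 0 otherwise.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += outcome
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact(records, privileged, unprivileged):
    """Ratio of the unprivileged group's selection rate to the privileged one's."""
    rates = selection_rates(records)
    if rates[privileged] == 0:
        raise ValueError("privileged group has no favorable outcomes")
    return rates[unprivileged] / rates[privileged]


# Hypothetical audit sample: group "A" is approved 60% of the time, group "B" 30%.
sample = [("A", 1)] * 60 + [("A", 0)] * 40 + [("B", 1)] * 30 + [("B", 0)] * 70

ratio = disparate_impact(sample, privileged="A", unprivileged="B")
flagged = ratio < 0.8  # assumed threshold: the four-fifths rule of thumb
print(f"Disparate impact ratio: {ratio:.2f} (flagged: {flagged})")
```

A ratio well below 1.0 is a signal to investigate, not proof of unfairness; in practice you would run this on real decision logs and pair it with other fairness metrics.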

Regulation and industry: NIST AI RMF, ISO/IEC 42001-like governance, and the EU AI Act push transparency, but gaps remain in enforcement and supply-chain audits. Companies like OpenAI and Anthropic publish red-team reports and bug-bounty programs, yet oversight lags for smaller vendors. Funding and cross-border compliance remain unresolved.

Real incidents typically stem from dataset errors or misuse: Microsoft Tay and biased outputs from Amazon Rekognition produced tangible harms. Apocalyptic takeover narratives get headlines but rarely map to the operational risks you should prioritize. Focus on regular audits and governance now.

Pros and Cons

- Pros: Practical audits, tooling (model cards, What-If Tool), and frameworks reduce real harms.

- Cons: Sensationalized scenarios overshadow common incidents; underfunded audits and fragmented standards persist.

How to Evaluate AI Claims and Use AI Responsibly

You should treat vendor claims skeptically and verify provenance, benchmarks, data sources, and interpretability. Demand model cards, red‑team results, and third‑party evaluations before deploying large models.

Vendor Claims vs. Verification

OpenAI GPT-4 Claims

OpenAI positions GPT-4 as a high-performing, generally safe large language model with API moderation and usage policies.

- Provenance: technical reports and blog posts

- Benchmarks: reported strong results on reasoning and MMLU-style tests

- Data disclosure: high-level descriptions only

Verification & Responsible Use

Concrete practices to evaluate claims and adopt AI responsibly across vendors.

- Request model cards and red‑team reports

- Insist on third‑party benchmark results (e.g., MMLU, TruthfulQA)

- Add human-in-the-loop, output verification, and monitoring pipelines

Vendor claims vs verifiable evidence

| Feature | OpenAI GPT-4 | Google Gemini | Anthropic Claude 2 |
|---|---|---|---|
| Model card / Documentation | Published technical report and safety blog; model card partially public | Research papers and product docs; limited model card details | Research papers and safety docs; limited low-level details |
| Training-data transparency | High-level descriptions (web, licensed, human‑labeled); no raw data release | Described at corpus level (web, books, code); no raw datasets published | High-level descriptions; focus on filtered/training hygiene; datasets not released |
| Third‑party benchmarks | Competitive across MMLU, reasoning, code (varies by eval) | Strong on reasoning and multimodal benchmarks in Google benchmarks | Competitive on safety-focused evaluations and general benchmarks |
| Safety & moderation | API moderation endpoint, system message controls, use guidelines | Integrated content filters and enterprise controls | Constitutional AI approach, extensive red‑team research |
| Explainability | Limited native explainability; use external tools for attribution | Limited; Google provides analysis tooling in Vertex AI | Partial; emphasis on safer outputs over transparency |

Practical checklist

- Provenance: ask for model cards, training timelines, and provenance of pretraining and fine-tuning data.

- Benchmarks: require independent evaluations (MMLU, TruthfulQA, HELM) and reproduce vendor claims.

- Data sources: insist on descriptions of licensed, public, and synthetic datasets and opt-out policies.

- Interpretability: request attribution tools, confidence estimates, and failure cases.
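One way to act on the "reproduce vendor claims" item is to score the model on your own held-out examples and check whether the vendor's headline number is statistically consistent with what you measured. The sketch below uses a Wilson score interval, which is one reasonable choice rather than a required method. The claimed accuracy and the counts are hypothetical.

```python
import math


def wilson_interval(correct, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if total == 0:
        raise ValueError("need at least one evaluated example")
    p = correct / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


def claim_is_consistent(claimed_accuracy, correct, total):
    """True if the claimed accuracy does not exceed the interval's upper bound."""
    _, high = wilson_interval(correct, total)
    return claimed_accuracy <= high


# Hypothetical numbers: the vendor claims 90%; you measure 410 correct out of 500.
low, high = wilson_interval(correct=410, total=500)
consistent = claim_is_consistent(0.90, correct=410, total=500)
print(f"Measured interval: {low:.3f} to {high:.3f}; claim consistent: {consistent}")
```

If the claim falls above the interval, ask the vendor which dataset and prompts produced their figure before concluding anything: the gap may come from a different evaluation setup rather than an inflated number.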

Questions to ask

- Can you provide a model card, red‑team report, and third‑party benchmark artifacts?

- How do you monitor drift, handle incidents, and log outputs for audits?

- What are the remediation steps for biased or harmful outputs?
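When a vendor answers the drift question, it helps to know what a basic drift check looks like. The sketch below computes the population stability index (PSI) between a baseline and a current distribution of model scores. PSI is one common choice and is not named in the vendor materials discussed here. The bin proportions and the 0.2 alert level are illustrative assumptions.

```python
import math


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two binned distributions given as lists of proportions."""
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp to eps so empty bins do not cause log(0) or division by zero.
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical score distributions over four bins.
baseline = [0.25, 0.25, 0.25, 0.25]  # share of outputs per bin at launch
current = [0.10, 0.20, 0.30, 0.40]   # share of outputs per bin this week

psi = population_stability_index(baseline, current)
drift_alert = psi > 0.2  # assumed rule-of-thumb threshold for a notable shift
print(f"PSI: {psi:.3f} (alert: {drift_alert})")
```

A check like this only flags that the output distribution moved; deciding whether the shift is harmful still needs a human to review samples.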

Everyday practices

- Add human-in-the-loop, sample outputs for verification, run bias audits, maintain consent and privacy controls, set up continuous monitoring.
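The human-in-the-loop and sampling practices above can be sketched as a simple routing step. This is an illustration under stated assumptions: `ModelOutput`, the `confidence` field, and both thresholds are hypothetical, and many language models do not return a calibrated confidence score, so you may need a separate scoring step to produce one.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    text: str
    confidence: float  # assumed to be a score in [0, 1] from your own scoring step


def route_outputs(outputs, threshold=0.85, sample_every=5):
    """Send low-confidence outputs to review and spot-check a sample of the rest."""
    queues = {"human_review": [], "spot_check": [], "auto_approve": []}
    passed = 0
    for output in outputs:
        if output.confidence < threshold:
            queues["human_review"].append(output)
            continue
        passed += 1
        # Every Nth passing output is still sampled for manual verification.
        if passed % sample_every == 0:
            queues["spot_check"].append(output)
        else:
            queues["auto_approve"].append(output)
    return queues


# Hypothetical batch of scored outputs.
scores = [0.95, 0.60, 0.90, 0.99, 0.70, 0.88, 0.97, 0.91]
batch = [ModelOutput(text=f"answer {i}", confidence=s) for i, s in enumerate(scores)]

queues = route_outputs(batch)
for name, items in queues.items():
    print(name, len(items))
```

Sampling from the high-confidence stream matters because confident outputs can still be wrong; the spot-check queue is what tells you whether the threshold is set sensibly.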

Pros and Cons

- Pros: faster decisions, scalable automation, improved insights.

- Cons: opaque training data, residual bias, regulatory risk if uncontrolled.

Include contractual requirements for logging, incident response, and data retention. Train your product and legal teams on model limitations and create escalation paths for unexpected model behavior. Measure outcomes regularly instead of relying on vendor claims.

Frequently Asked Questions

You get concise answers to common concerns about AI, with specific product examples and policy references. Review-style responses highlight practical trade-offs.

Common AI FAQs

Will AI take my job or just change it?

Is AI conscious or sentient?

Can AI be trusted to make unbiased decisions?

How accurate are AI systems and how should you verify them?

What regulations exist to safeguard people from AI harms?

How can you tell whether content or decisions were produced by AI?

Pros: tools like GPT-4 and Microsoft Copilot boost productivity and creativity. Cons: risks include bias, hallucinations, and displacement in routine jobs without retraining programs.

Prefer vendors with model cards and audit trails.

Conclusion

In this review of common AI myths, you saw claims like “AI is infallible,” “AI will replace experts,” and “AI has no explainability” fail against evidence from GPT-4, Anthropic Claude, and Meta’s Llama 2. These systems are powerful but fallible, so validate outputs and add guardrails.

🎯 Key Takeaways

- Myths vs. reality: GPT-4, Claude, and Llama 2 are powerful but limited, and their outputs require verification.

- Evaluate platforms by accuracy, explainability, safety, and cost: use OpenAI, Anthropic, Google Cloud, Hugging Face for comparisons.

- First moves: run a small pilot, use datasets to benchmark, enable guardrails (human review, model explainability) and consult resources like arXiv, OpenAI docs, and Hugging Face tutorials.

Pros

- OpenAI and Hugging Face enable fast prototyping.

- Google Cloud AI provides enterprise integrations.

Cons

- Hallucinations and bias (GPT-4, Claude).

- Costs, latency, and privacy trade-offs.

Next: pilot with OpenAI or Hugging Face, benchmark on held-out data, add human review and model explainability, and read OpenAI docs and Hugging Face tutorials to evaluate tools and scale responsibly.

TL;DR: This post debunks common AI myths, showing most deployed systems are narrow pattern‑matching tools (fraud detection, recommendations, OCR, language assistants) rather than general intelligence, using industry surveys and benchmarks (OpenAI, Hugging Face) to separate hype from measurable impact. It summarizes practical pros (productivity, faster prototyping, better insights) and cons (bias, privacy, vendor lock‑in, wasted spend) and gives vendor checks and guidance to adopt AI responsibly with human oversight.

Ultimately, AI is not a magic wand, nor is it our enemy; rather, it is a powerful extension of our intelligence. When we test the myths against reason, it becomes clear that the future of AI depends on proper control and ethical use by humans. In the rapidly changing world of 2026, instead of being terrified by technology, we should learn to understand it correctly. Only if we shake off misconceptions, accept reality, and treat AI as an ally will we be able to build a prosperous and technology-dependent future.

💡 Key Insight: AI Reality Check

“AI is not a magic wand that transforms everything overnight; it is a powerful co-pilot. In 2026, success doesn’t come from letting AI lead, but from humans learning to steer it with ethics and intent.”

I’m Md. Osman Goni, founder of SearchAIFinder and an AI content specialist. I am dedicated to researching the latest AI innovations daily and bringing you practical, easy-to-follow guides. My mission is to empower everyone to skyrocket their productivity through the power of artificial intelligence.

💬 We’d Love to Hear From You!

Which of these AI tools are you excited to try first? Let us know in the comments below!