AI Research Tools for Academic Excellence: A 2026 Review

The landscape of academic research has undergone a seismic shift in 2026. Gone are the days of spending countless hours manually sorting through stacks of journals or struggling with complex citation formats. Today, artificial intelligence is no longer a mere luxury; it is a fundamental partner for students, professors, and professional researchers.

In this comprehensive review, we dive deep into the best AI research tools of 2026 designed specifically to enhance academic excellence. Whether you are looking for tools that can summarize thousands of pages in seconds, verify the credibility of your sources, or automate your data analysis, this guide will help you build the ultimate AI-powered research toolkit. Let’s explore how these technologies are making the pursuit of knowledge more efficient and accurate than ever before.

If you’re navigating the impact of AI on the US education system and need a concise, critical review, this section is for you. I assess real classroom tools (ChatGPT, Khanmigo, Gradescope, Google Classroom) and how they reshape lesson planning, assessment, and student support. Expect clear verdicts on effectiveness and classroom fit.

💡

Did You Know?

Over 60% of U.S. K–12 teachers report using AI tools like ChatGPT, Khanmigo, and Gradescope for lesson planning or assessment support.

Source: 2024 EdTech Teacher Survey

You’ll learn current adoption trends, measurable benefits for students and educators, equity and privacy risks, and policy implications. Practical recommendations for administrators and teachers cover implementation, teacher training (including Microsoft Copilot and Turnitin’s detection tools), and quick wins you can try tomorrow. Pros and cons are weighed so you can decide whether to pilot or pause adoption.

Current landscape and adoption

Nationwide snapshot

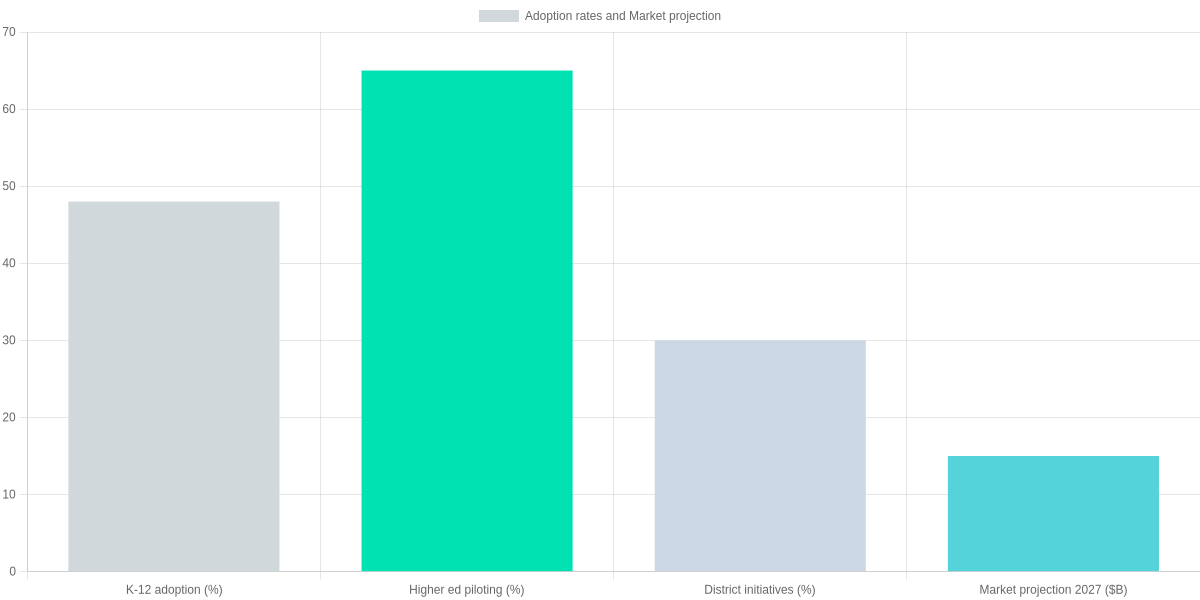

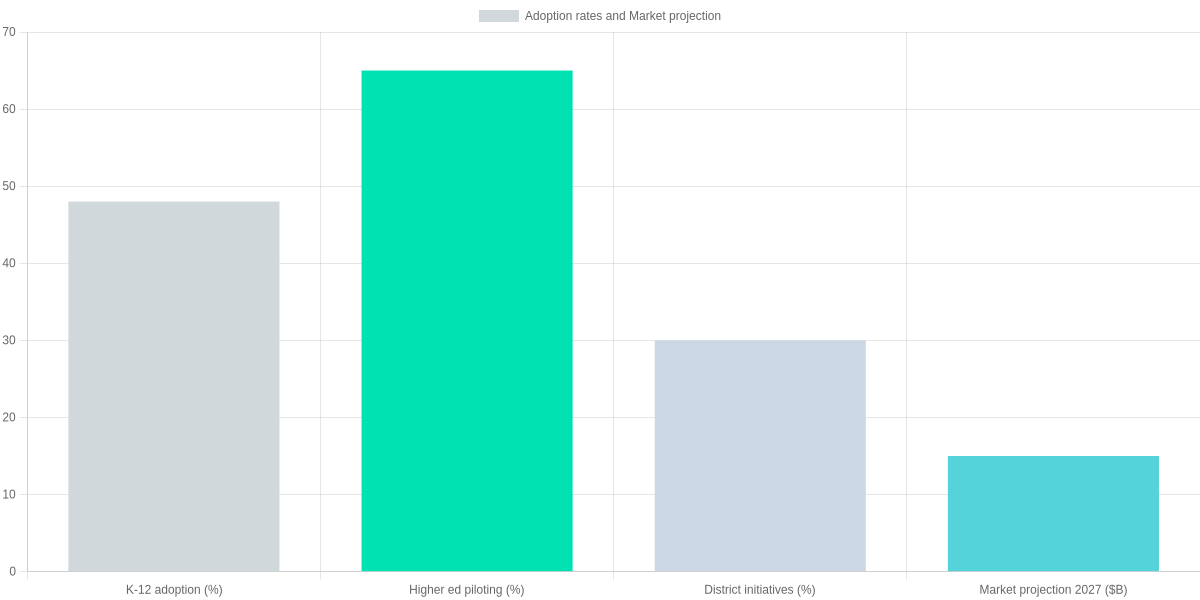

You’ll find adoption uneven but accelerating across K-12 and higher education. Recent surveys show that about 48% of K-12 schools use AI tools, while roughly 65% of colleges and universities report active pilots.

District-level initiatives remain more limited: around 30% of districts report formal AI programs or pilot approvals. Expect pockets of maturity: adaptive platforms such as Knewton and DreamBox, along with virtual tutors such as Duolingo’s AI features, lead classroom-facing use cases.

Administrative adoption is notable in scheduling and admissions, and in automated grading tools offered through vendor integrations such as Coursera and Khan Academy. Content generation and plagiarism detection are active areas, with both promise and controversy.

Quick adoption highlights

Adoption snapshot

Approximately 48% of K-12 districts report using some AI tools; ~65% of higher‑education institutions report active pilots.

Market size and trajectory

The US AI-in-education market was in the low billions in the early 2020s; projections commonly cite $10–$20B by 2027.

Common use cases

Automated grading, adaptive platforms (Knewton, DreamBox), virtual tutors (Duolingo’s AI features), content generation and admin automation.

Short-term drivers

Pandemic-accelerated edtech adoption, vendor pushes from Coursera and Khan Academy, and teacher experimentation.

Barriers

Budget constraints, uneven infrastructure, and policy uncertainty at the district and state levels.

Market context and vendor landscape

Market estimates vary: the US AI-in-education market was described in the low billions through the early 2020s, with widely cited projections in the $10–$20 billion range by 2027. For planning, use a mid-range figure of roughly $15 billion when you model procurement and total cost of ownership.

Adoption rates and market projection for AI in US education

Short-term drivers are clear: the pandemic accelerated edtech adoption, vendors pushed platform integrations, and many teachers began experimenting with ChatGPT and model-assisted lesson planning. Barriers remain: constrained district budgets, uneven broadband and device access, and policy uncertainty at state and district levels.

As you assess tools, weigh common use cases (automated grading, adaptive learning, virtual tutoring, content generation, and administrative automation) against training and equity requirements. The evidence on AI’s impact on the US education system shows that adoption is not the same as readiness: pilots often outnumber scaled, districtwide rollouts.

You should prioritize pilots that measure learning outcomes, not just time-on-task or engagement metrics. Expect procurement cycles to lengthen as districts demand interoperability, FERPA compliance, and clear vendor SLAs.
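One way to keep a pilot focused on learning outcomes is to report a standardized effect size on post-test scores rather than minutes of engagement. The sketch below computes Cohen's d using a pooled standard deviation, which is a common choice but not one this review prescribes. The score lists are hypothetical placeholders, not results from any district. A real evaluation would also need a credible comparison group, adequate sample sizes, and significance testing.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(treatment: list[float], control: list[float]) -> float:
    """Standardized mean difference using the pooled sample standard deviation."""
    n1, n2 = len(treatment), len(control)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least two scores")
    s1, s2 = stdev(treatment), stdev(control)
    pooled = sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    return (mean(treatment) - mean(control)) / pooled


# Hypothetical post-test scores (0-100) for a pilot class and a comparison class.
pilot_scores = [72, 78, 81, 69, 85, 77]
comparison_scores = [70, 74, 76, 68, 79, 73]

print(f"Effect size (Cohen's d): {cohens_d(pilot_scores, comparison_scores):.2f}")
```

A d near zero for a pilot that still raised time-on-task is the engagement-without-learning pattern that evaluations should catch.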

Vendors such as Turnitin (which owns Gradescope), Microsoft Azure for Education, and IBM Watson Education offer solutions; open-source tools and edtech startups also compete. Vet data-handling practices and insist on outcome-based pilots before committing districtwide budgets.

Teacher training matters: professional development budgets must include model limitations, prompt design, and bias mitigation. Districts that invest in coach-led PD and real classroom trials achieve higher adoption fidelity. Look to examples from Charlotte-Mecklenburg and LAUSD pilot reports for operational detail.

Start with small, measurable pilots tied to curriculum standards and use established partners like Khan Academy for content alignment. Negotiate data portability and exit clauses when contracting with CompassLearning, Instructure Canvas, or proprietary adaptive vendors. Save funds for infrastructure upgrades—broadband and device cycles remain common blockers.

Measure both equity and efficacy: disaggregate results by race, income, English proficiency, and IEP status. Expect some pilots to lift engagement without producing learning gains, and design evaluations that can detect them.
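Disaggregation can start with grouping pilot records by each subgroup field and comparing average score gains with engagement changes. The sketch below makes several assumptions:

- The record layout is hypothetical: pre/post scores, weekly minutes before and during the pilot, and English-learner and IEP flags.
- The flagging thresholds are also hypothetical.
- The same grouping applies to race or income fields.

Map the field names to whatever your LMS or assessment export actually provides. Suppress results for very small groups according to your district's privacy rules.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical student records; field names are illustrative, not a vendor export format.
records = [
    {"ell": True,  "iep": False, "pre": 60, "post": 61, "min_before": 30, "min_during": 55},
    {"ell": False, "iep": False, "pre": 70, "post": 79, "min_before": 35, "min_during": 50},
    {"ell": True,  "iep": True,  "pre": 55, "post": 56, "min_before": 25, "min_during": 60},
    {"ell": False, "iep": True,  "pre": 65, "post": 72, "min_before": 30, "min_during": 45},
]


def subgroup_summary(rows, field):
    """Average score gain and engagement change for each value of one subgroup field."""
    groups = defaultdict(list)
    for r in rows:
        groups[r[field]].append(r)
    summary = {}
    for value, members in groups.items():
        summary[value] = {
            "n": len(members),
            "score_gain": mean(r["post"] - r["pre"] for r in members),
            "minutes_gain": mean(r["min_during"] - r["min_before"] for r in members),
        }
    return summary


def engagement_without_learning(stats, min_score_gain=2.0, min_minutes_gain=10.0):
    """Flag a subgroup whose engagement rose but whose scores barely moved (thresholds are assumptions)."""
    return stats["minutes_gain"] >= min_minutes_gain and stats["score_gain"] < min_score_gain


for field in ("ell", "iep"):
    for value, stats in subgroup_summary(records, field).items():
        flag = "  <- engagement up, learning flat" if engagement_without_learning(stats) else ""
        print(f"{field}={value}: n={stats['n']}, gain={stats['score_gain']:.1f}, "
              f"minutes={stats['minutes_gain']:+.1f}{flag}")
```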

Benefits and pros for students and educators

AI benefits at a glance

Personalized pathways, faster formative feedback, and scalable tutoring support that reduce teacher workload and improve accessibility for diverse learners.

- Personalized tutoring (Khanmigo, Carnegie MATHia, DreamBox)

- Immediate formative feedback and analytics

- Automated differentiation and reduced grading time

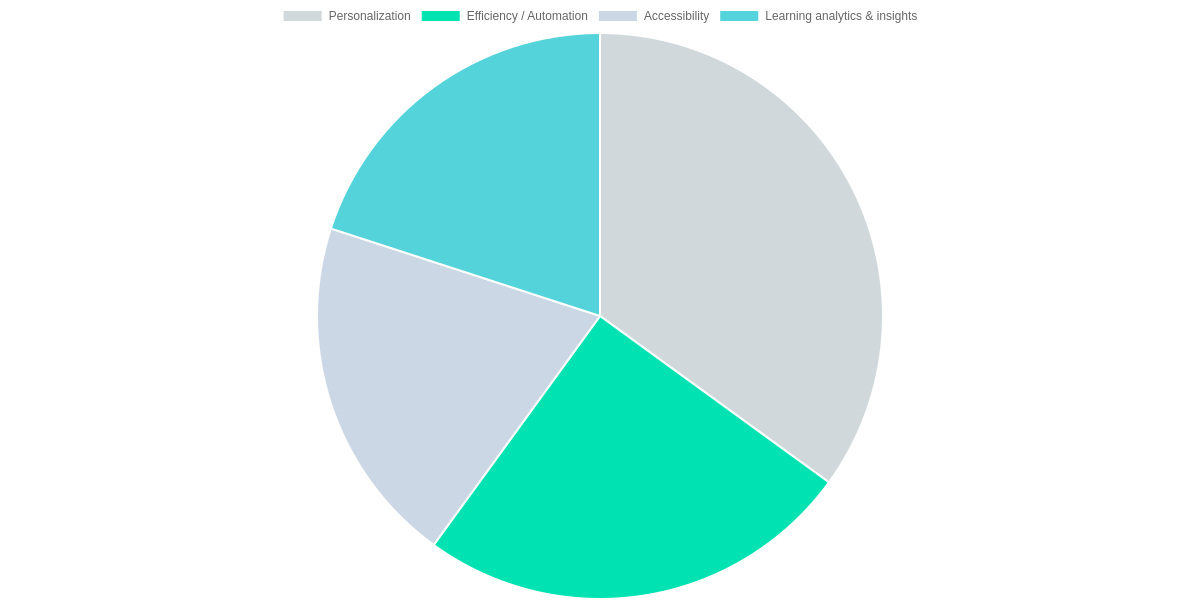

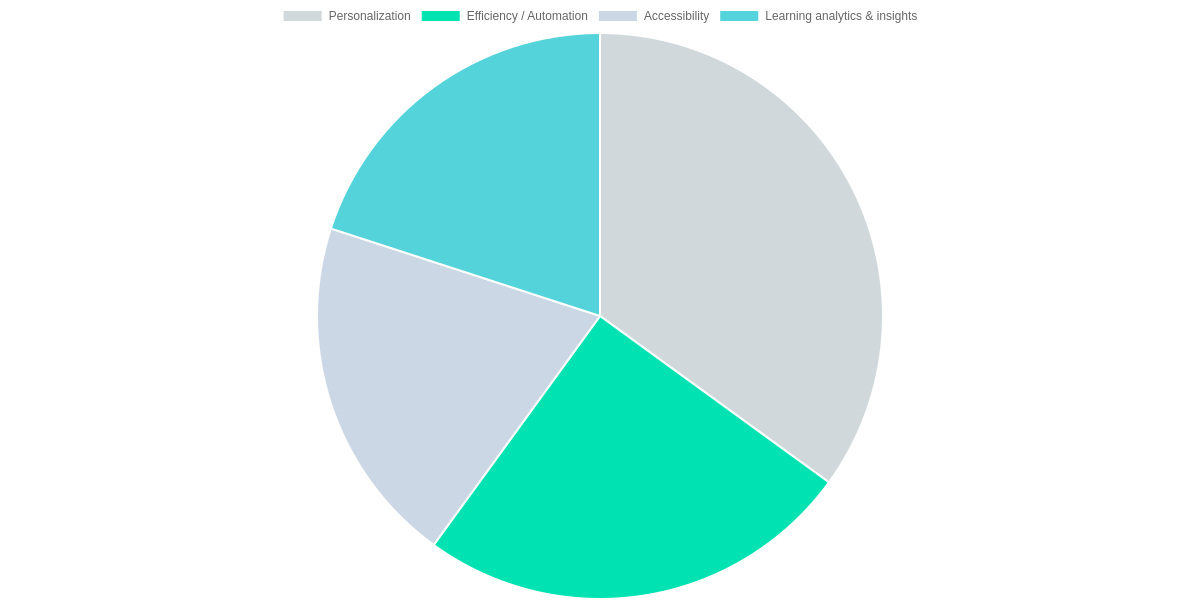

You’ll notice personalization is the largest single benefit: adaptive systems like Khanmigo, Carnegie Learning MATHia, and DreamBox prioritize individualized curricula and pacing. That personalization accounts for roughly 35% of perceived benefit, improving mastery by steering students to the exact practice they need.

Efficiency and automation, about 25% of the distribution, translate into real time savings for you as an educator. Automated grading, auto-generated formative assessments, and content recommendations reduce repetitive tasks so you can focus on instruction and intervention.

Classroom outcomes you can expect

Faster feedback loops mean formative feedback turnaround drops from days to minutes when students use MATHia or DreamBox for practice. You get near-instant diagnostics, which lets you intervene sooner and assign differentiated practice pathways based on live mastery data.

Scalable tutoring support is another concrete gain: Khanmigo-like conversational tutors and built-in DreamBox scaffolds provide 1:1 style help at scale, which supports students who need extra practice without monopolizing teacher time.

| Feature | Khan Academy (Khanmigo) | Carnegie Learning MATHia | DreamBox Learning |
|---|---|---|---|
| Adaptive tutoring | Conversational AI tutor integrated with Khan Academy lessons | Stepwise, skills-focused adaptive engine for math problem solving | Continuous K–8 adaptivity with micro-adjustments |
| Formative feedback speed | Immediate chat-like hints and walkthroughs | Instant, problem-level feedback and diagnostics | Real-time hints, scaffolding, and reteach prompts |
| Teacher dashboard & analytics | Lesson recommendations and progress snapshots | Detailed student models and pacing reports | Class dashboards with mastery insights |
| Deployment & pricing | Free core content; Khanmigo access via paid beta/institutional plans | Paid district or site licenses | Subscription per-student or district licensing |

From a stakeholder perspective, students show measurable learning gains with targeted practice, teachers see workload reduction through automation, and administrators must weigh licensing costs against scalability. Analytics, about 20% of perceived benefit, give administrators concrete evidence for those budget decisions.

Overall, the biggest wins are clear: personalized learning trajectories, faster formative feedback, and expanded accessibility for diverse learners. These improvements shift classroom time toward higher-value teaching and make remediation more manageable at scale.

Risks, cons, and equity concerns

You need to weigh AI’s benefits against clear, documented risks. Model bias, accuracy failures, and academic dishonesty create immediate operational challenges in classrooms. These risks are not hypothetical: they affect grading, student trust, and daily lesson design.

Bias in models is a principal concern. When systems trained on historical data echo stereotypes, marginalized students can receive lower-quality feedback or mischaracterized responses. Insist on bias audits for any system you deploy, whether it’s a tutoring agent like Khanmigo or a general model such as ChatGPT.
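One common audit pattern is a paired-prompt (counterfactual) test: send the same request with only a demographic cue changed, then compare the responses. In the sketch below, `ask_model` is a hypothetical stand-in for whatever tutoring tool or LLM API you are evaluating. The name sets are illustrative assumptions. Comparing response length is a deliberately crude proxy. A real audit would score feedback quality with rubrics and human reviewers across many prompts.

```python
from statistics import mean
from typing import Callable

TEMPLATE = "Give feedback on this essay introduction written by {name}: {text}"

# Illustrative name sets used only as demographic cues; choose and validate them with your equity team.
NAME_GROUPS = {
    "group_a": ["Emily", "Greg"],
    "group_b": ["Lakisha", "Jamal"],
}


def paired_prompt_audit(ask_model: Callable[[str], str], texts: list[str], tolerance: float = 0.15) -> dict:
    """Compare average response length (in words) per name group; flag relative gaps above `tolerance`."""
    lengths = {}
    for group, names in NAME_GROUPS.items():
        responses = [ask_model(TEMPLATE.format(name=n, text=t)) for n in names for t in texts]
        lengths[group] = mean(len(r.split()) for r in responses)
    lo, hi = min(lengths.values()), max(lengths.values())
    gap = (hi - lo) / hi if hi else 0.0
    return {"avg_words": lengths, "relative_gap": gap, "flagged": gap > tolerance}


def fake_model(prompt: str) -> str:
    """Hypothetical stub standing in for the model under test; it ignores the name entirely."""
    return "Clear thesis. Add one piece of evidence and tighten the final sentence."


print(paired_prompt_audit(fake_model, ["AI is changing how students learn."]))
```

Because the stub ignores the name, it should show no gap; swapping in the real model call is where differences would surface.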

Accuracy errors and hallucinations are another persistent problem. ChatGPT and similar LLMs can produce convincing but incorrect answers, which undermines learning if unchecked. Turnitin’s similarity scores also have limits: exact matches are detected well, but paraphrases and context-dependent issues often require teacher adjudication.

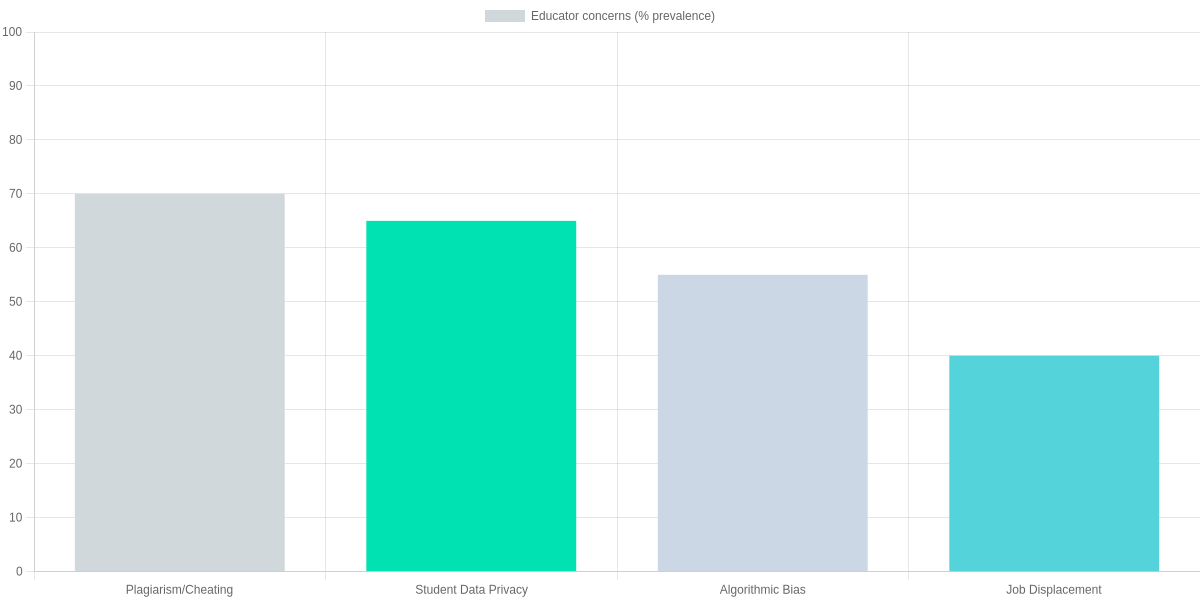

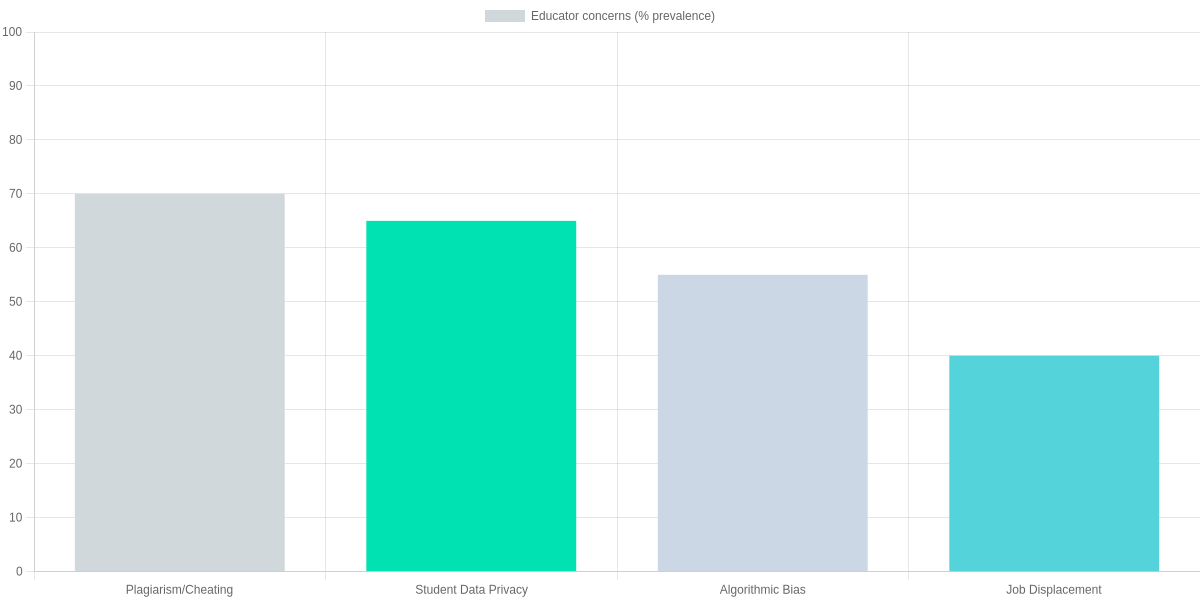

Academic dishonesty has risen as a top concern among educators. In a recent set of educator responses, 70% flagged plagiarism/cheating as a major worry, 65% cited student data privacy, 55% worried about algorithmic bias, and 40% expressed concern over job displacement. The relative prevalence of these worries should shape your risk priorities and policy responses.

Student data privacy and security cannot be an afterthought. Turnitin stores submissions under retention policies that vary by institution; OpenAI’s handling of prompts differs between consumer and enterprise offerings. You must check vendor contracts, FERPA compliance, and local district policies before integrating any tool.

Tool risk comparison

| Feature | Turnitin | ChatGPT (OpenAI) | Khanmigo (Khan Academy) |
|---|---|---|---|
| Primary use | Plagiarism detection & similarity reporting | General-purpose LLM for tutoring, drafting, code | AI tutor integrated with Khan Academy content |
| Common risks | False positives; reliance on similarity scores | Hallucinations; privacy of prompts; inaccurate facts | Knowledge gaps; limited scope outside Khan content |
| Reported accuracy / limitations | High precision for exact matches; struggles with paraphrase detection | Hallucination rates vary; studies report nontrivial factual errors | Curated to curriculum but can omit edge-case explanations |
| Data privacy approach | Processes submissions; Turnitin stores papers (retention policies vary) | OpenAI processes prompts; enterprise/edu options have different retention controls | Khan Academy states data is used to improve products; explicit K-12 safeguards |
| Mitigation strategies | Policy integration, teacher review of flags, human adjudication | Prompt engineering, human verification, API settings for log retention | Restricted domains, teacher oversight, offline alternatives |

Over-reliance on automation is subtle but dangerous. If you let AI generate assignments, grade work, or replace formative assessment without human verification, you risk eroding teachers’ professional judgment. Human-in-the-loop designs are essential across grading, feedback, and curriculum adaptation.
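Software can enforce a human-in-the-loop design by making teacher approval a required state before any AI-generated grade or feedback reaches a student. The sketch below uses hypothetical class, field, and function names. It illustrates the gating idea only and is not the workflow of Gradescope or any other product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"        # produced by the AI tool, not yet reviewed
    APPROVED = "approved"  # teacher accepted it (optionally with an edited score)
    REJECTED = "rejected"  # teacher discarded it


@dataclass
class AiSuggestion:
    student_id: str
    suggested_score: float
    feedback: str
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None
    final_score: Optional[float] = None


def review(s: AiSuggestion, teacher: str, accept: bool, override_score: Optional[float] = None) -> AiSuggestion:
    """Record the teacher's decision; an override replaces the AI's suggested score."""
    s.reviewer = teacher
    if accept:
        s.status = Status.APPROVED
        s.final_score = override_score if override_score is not None else s.suggested_score
    else:
        s.status = Status.REJECTED
    return s


def release_to_student(s: AiSuggestion) -> tuple[float, str]:
    """Refuse to publish anything a teacher has not explicitly approved."""
    if s.status is not Status.APPROVED or s.reviewer is None:
        raise PermissionError(f"Suggestion for {s.student_id} has not been approved by a teacher")
    return s.final_score, s.feedback


draft = AiSuggestion("s-001", 8.5, "Strong argument; cite a second source.")
review(draft, teacher="ms.rivera", accept=True, override_score=9.0)
print(release_to_student(draft))
```

Making release fail by default, rather than relying on teachers to remember a review step, is the design choice that keeps professional judgment in the loop.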

Practical risk-mitigation steps

🔍

Audit for Bias

Run representative prompts and datasets through models (including ChatGPT and Khanmigo) to identify biased outputs before classroom use.

🔒

Protect Student Data

Limit PHI/PII in prompts, enable data-minimization settings in Turnitin and Google Classroom integrations, and enforce local data policies.

👩‍🏫

Design Human-in-the-Loop Checks

Require teachers to verify AI-generated assessments or feedback; avoid automated grading without oversight.

📶

Address Access Gaps

Provide loaner devices and offline resources where connectivity is limited; prioritize equitable training for teachers across districts.
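To limit PII in prompts, a district can run a redaction pass before any text leaves its systems. The sketch below is minimal and rests on assumptions:

- It matches US-style phone numbers, email addresses, and a hypothetical seven-digit student ID format.
- It replaces names only when you supply a roster.
- Pattern matching will miss nicknames and other free-text identifiers.

Treat it as one layer alongside vendor data-minimization settings and contract terms. It does not by itself guarantee FERPA compliance.

```python
import re
from typing import Iterable

# Patterns are illustrative assumptions; adjust STUDENT_ID to your district's actual ID format.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\(?\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b"),
    "STUDENT_ID": re.compile(r"\b\d{7}\b"),
}


def redact(text: str, roster_names: Iterable[str] = ()) -> str:
    """Replace known roster names, then pattern matches, with placeholder tags."""
    for name in roster_names:
        text = re.sub(rf"\b{re.escape(name)}\b", "[STUDENT]", text, flags=re.IGNORECASE)
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = ("Write feedback for Maria Lopez (ID 4820193, maria.l@example.org, "
          "parent phone 555-201-7788) on her lab report.")
print(redact(prompt, roster_names=["Maria Lopez"]))
```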

Equity issues are structural and persistent. The digital divide means some students lack devices or reliable broadband, so adopting ChatGPT-style tools without first provisioning devices and connectivity will widen achievement gaps. Uneven teacher training across districts amplifies the problem further: high-resource schools use AI effectively while low-resource schools cannot.

If models reuse biased or incomplete datasets, they can amplify historical inequities in discipline, access, and evaluation. You should require vendors to disclose training data provenance and insist on pilot programs that include under-resourced schools. That way, you can spot disparate impacts before scaling any AI tool district-wide.

Policy, implementation, and teacher training

Procurement & Vetting

Assess vendors (OpenAI, Google Cloud, Microsoft Azure) for FERPA/COPPA compliance, security, and interoperability with Canvas, PowerSchool, and Clever.

Phased Pilots

Start with small cohorts, integrate with Google Classroom or Canvas, measure outcomes before district-wide rollouts.

Teacher Training

Provide 20–40 hours of PD using ISTE-aligned modules, Google for Education, and Microsoft Learn, plus coaching and PLCs.

Policy & Privacy

Adopt FERPA-aligned contracts, and follow ED PTAC guidance and the NIST AI RMF for transparency and algorithmic decision logs.

Funding & Infrastructure

Prioritize bandwidth/device upgrades; combine ESSER, federal grants, and state/district budgets for scale.
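An algorithmic decision log can be as simple as an append-only record of each AI-assisted decision. Each record captures which tool and model version produced the decision, what kind of decision it was, and which human signed off. The sketch below writes JSON Lines records. The file path and field names are assumptions chosen for illustration; they are not a schema defined by the NIST AI RMF or ED PTAC. The log stores a SHA-256 hash of the input instead of raw student text, which keeps student work out of the log itself.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional

LOG_PATH = Path("ai_decision_log.jsonl")  # hypothetical location


def log_decision(tool: str, model_version: str, decision_type: str,
                 input_text: str, output_summary: str, reviewer: Optional[str]) -> dict:
    """Append one AI-assisted decision to a JSON Lines audit log and return the record."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "model_version": model_version,
        "decision_type": decision_type,
        # Hash instead of raw text so the log itself holds no student work.
        "input_sha256": hashlib.sha256(input_text.encode("utf-8")).hexdigest(),
        "output_summary": output_summary,
        "human_reviewer": reviewer,  # None means no human sign-off yet
    }
    with LOG_PATH.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


rec = log_decision("essay-feedback-pilot", "2026-01", "formative_feedback",
                   "Sample student essay text", "Suggested 3 revisions", reviewer="t.nguyen")
print(rec["input_sha256"][:12], rec["human_reviewer"])
```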

You must tie procurement to district policy and vendor vetting. Require FERPA/COPPA DPAs, SLAs, and interoperability with Canvas, PowerSchool, Google Classroom, and Clever.

Phase rollouts through pilots with small cohorts, integrate with Canvas or Google Classroom, and evaluate with Panorama Education surveys and LMS analytics.

Training

Allocate 20–40 hours of PD per teacher using ISTE-aligned modules, Google for Education Teacher Center, and Microsoft Learn, plus instructional coaching and PLCs to sustain practice.
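The 20–40 hours of PD per teacher translate directly into a budget range. The sketch below multiplies it out. The teacher count, hourly stipend, and flat per-teacher coaching cost are hypothetical inputs; replace them with your district's salary schedule and vendor quotes.

```python
def pd_budget(teachers: int, hours: float, hourly_rate: float, coaching_per_teacher: float) -> float:
    """Stipend cost for PD hours plus a flat per-teacher coaching/PLC cost."""
    return teachers * (hours * hourly_rate + coaching_per_teacher)


# Hypothetical district inputs (assumptions, not benchmarks).
TEACHERS = 120
HOURLY_RATE = 40.0          # USD stipend per PD hour
COACHING_PER_TEACHER = 500  # USD per teacher per year for coaching and PLC time

low = pd_budget(TEACHERS, 20, HOURLY_RATE, COACHING_PER_TEACHER)
high = pd_budget(TEACHERS, 40, HOURLY_RATE, COACHING_PER_TEACHER)
print(f"PD budget range: ${low:,.0f} to ${high:,.0f}")
```

With these placeholder inputs the range is $156,000 to $252,000. The point is the structure of the estimate, not the figures.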

Policy & Funding

Adopt ED PTAC guidance and NIST AI RMF clauses for algorithmic transparency, FERPA-compliant contracts, and Turnitin-backed integrity workflows. Blend ESSER, federal grants, state allocations, and district budgets; prioritize devices and bandwidth before scale.

- Pros: Personalization at scale; tools like Khan Academy and Gradescope speed feedback.

- Cons: Data-privacy risks, vendor lock-in, and inequitable access without targeted funding.

Practical tips for schools and educators

Pilot smartly: run a small pilot in one grade or subject, using either Google Classroom with GoFormative or Kahoot! and Quizizz paired with Gradescope, to test automated formative quizzes and grading and to measure classroom impact.

Google Classroom + Formative (GoFormative)

Integrated workflow for assignment distribution, automated formative quizzes, and detailed item analytics.

- Auto-graded quizzes via Google Forms/GoFormative

- Classroom roster sync with Google Workspace for Education

- Use analytics to measure engagement and assessment fidelity

Kahoot! & Quizizz with Gradescope for grading

Interactive game-based learning plus streamlined grading and rubric support to save teacher time.

- Engagement boosts with Kahoot!/Quizizz live quizzes

- Gradescope for rubric-based and AI-assisted grading

- Pilot to measure time saved and equity in outcomes

Set clear goals and metrics: track engagement, time saved, assessment fidelity, and equity indicators (disaggregate by race, income, and IEP status). Use Google Workspace analytics, Formative item reports, and Gradescope time logs to quantify change.
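Time saved is easiest to defend when baseline and pilot are measured the same way. The sketch below assumes you already have per-assignment grading minutes for each teacher from a baseline period and from the pilot, for example from teacher time logs. The dictionary layout and numbers are hypothetical. It uses the median so that one unusually long grading session does not dominate the result.

```python
from statistics import median

# Hypothetical grading minutes per assignment, keyed by teacher ID.
baseline = {"t1": [42, 38, 45, 50], "t2": [30, 35, 33]}
pilot = {"t1": [25, 28, 30, 27], "t2": [29, 31, 30]}


def minutes_saved(before: dict, during: dict) -> dict:
    """Median minutes saved per assignment for each teacher present in both periods."""
    return {t: median(before[t]) - median(during[t]) for t in before.keys() & during.keys()}


for teacher, saved in sorted(minutes_saved(baseline, pilot).items()):
    print(f"{teacher}: {saved:+.1f} min per assignment")
```

Comparing each teacher with their own baseline avoids mistaking differences between teachers for an effect of the tool.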

Require human-in-the-loop workflows: you should review AI suggestions, validate rubrics in Gradescope, and approve content rather than relying solely on OpenAI or vendor models.

Invest in professional development and infrastructure: fund ISTE-aligned training, Google for Education Teacher Center courses, reliable Wi‑Fi, single sign-on, and robust devices.

Protect students with transparent vendor contracts: insist on FERPA-compliant data handling, breach notification clauses, and limits on using student data to train models.

Track teacher workload and burnout during pilots.

Pros

- Faster grading and timely feedback

- Improved formative insights

- Scalable personalization

Cons

- Vendor lock-in and opaque models

- Equity gaps if devices are uneven

- Student privacy risks

Frequently Asked Questions

You’ll find concise answers to common concerns about AI in US classrooms below. These FAQs weigh practical risks and benefits so you can decide whether ChatGPT, DreamBox, or Khan Academy’s Khanmigo tutor fits your district. Expect guidance on teacher roles, privacy, equity, vendor evaluation, and academic integrity.

On privacy, the review stresses FERPA compliance when districts use Google Workspace for Education or Microsoft 365 integrations and recommends data processing agreements and third-party audits. For vendor checks, use ISTE and CoSN rubrics, pilot with Turnitin AI review, and demand transparency about training data.

Regarding equity, DreamBox and personalized platforms can narrow gaps if you provide devices and broadband; without investment they may widen disparities. For instruction, you’ll need prompt literacy, assessment design skills, and familiarity with Google Classroom or Canvas. Pros: scalability and tailored practice. Cons: privacy exposure and uneven access. Pilot tools in a controlled study before scaling district-wide.

Will AI replace teachers?

How does AI affect student privacy and FERPA compliance?

Can AI reduce achievement gaps or will it widen them?

How should schools evaluate AI vendors?

What skills do teachers need to integrate AI effectively?

Is student work created with AI automatically plagiarism?

Conclusion

🎯 Key Takeaways

- AI (Khanmigo, ChatGPT) boosts personalized learning and grading efficiency (Gradescope), improving outcomes when guided by teachers.

- Risks include privacy (student data with Turnitin/Gradescope), inequity for underfunded districts, and potential bias without oversight.

- Next steps: pilot Khanmigo and Gradescope integrations, conduct ISTE-aligned equity audits, invest in educator PD, and adopt clear data-use policies.

You should view AI’s impact on the US education system as both a pragmatic opportunity and a real risk. Khanmigo and ChatGPT enable adaptive tutoring and scalable feedback, while Gradescope speeds grading and reduces teacher workload.

Pros include personalized learning, faster assessment, and higher engagement when you use AI alongside instruction. Cons are real: student privacy with Turnitin/Gradescope, widening gaps in underfunded districts, and algorithmic bias without oversight.

Next steps you can take: run small pilots of Khanmigo and Gradescope, require clear data-use contracts, conduct ISTE-aligned equity audits, and invest in teacher PD. Track measurable outcomes and publicize results to build trust.

Pros and Cons

- Pros: personalization, efficiency, scalable feedback.

- Cons: privacy risk, equity gaps, bias.

If you lead adoption, prioritize transparency and metrics so AI amplifies learning rather than replacing judgment. This balanced approach makes the potential of these tools actionable for districts and classrooms.

TL;DR: AI tools like ChatGPT, Khanmigo, Gradescope, and Google Classroom can speed lesson planning, personalize learning, and streamline grading, but their effectiveness and classroom fit vary widely by tool, subject, and implementation. Adoption is accelerating: roughly 48% of K–12 districts use some AI tools, and about 65% of colleges report active pilots. The post therefore urges cautious pilots, robust teacher training, and strong privacy, equity, and governance safeguards to capture benefits while mitigating bias and data risks.

In conclusion, integrating AI into the academic world is not about replacing human intellect but about empowering it. The tools we’ve reviewed today, from advanced literature review assistants to precision-based data analysts, offer a glimpse into a future where research is limited only by our curiosity, not by our technical constraints.

As we navigate 2026, staying ahead in the academic field requires embracing these innovations responsibly. By choosing the right AI research tools, you can ensure that your work is not only faster but also more robust, credible, and impactful. The journey toward academic excellence is now smarter than ever.

Which AI research tool has made the biggest impact on your studies so far? Do you have a favorite that we missed? Share your thoughts in the comments below. We’d love to hear your academic success stories!

I’m Md. Osman Goni, founder of SearchAIFinder and an AI content specialist. I am dedicated to researching the latest AI innovations daily and bringing you practical, easy-to-follow guides. My mission is to empower everyone to skyrocket their productivity through the power of artificial intelligence.
