10 Best AI Research Tools for Academic Excellence: 2026 Review

Did You Know?

A 2024 survey found that over 50% of researchers use AI tools for literature review, data analysis, or drafting.

Source: 2024 cross-disciplinary survey

AI is reshaping academic research by automating literature discovery, extracting datasets, and accelerating draft writing. Tools such as Elicit, Semantic Scholar, Connected Papers, ResearchRabbit, Zotero, Mendeley, Scholarcy, ChatGPT, Claude, and Perplexity now sit at the center of many labs.

What you’ll learn

This review evaluates four categories: literature mapping, summarization, reference management, and writing assistants. You’ll find side-by-side comparisons of performance, pricing, and integrations with Overleaf and Zotero, plus practical tips for plugging Elicit or ChatGPT into your workflow.

Pros include faster discovery (Elicit, Connected Papers) and cleaner references (Zotero, Mendeley). Cons cover hallucinations, citation errors, and data privacy, all of which we test across tools. This review stays practical and critical so you can choose tools that fit your methods.

Overview of AI Research Tools and Categories

As you evaluate tools, four categories dominate academic workflows: literature discovery, reference management, writing assistants, and data analysis. This review focuses on how Semantic Scholar, ResearchRabbit, Zotero, ChatGPT, and Jupyter map to your research stages.

Literature discovery platforms like Semantic Scholar, Connected Papers, and ResearchRabbit accelerate hypothesis formation and citation chaining, letting you spot gaps faster. Reference managers (Zotero, Mendeley, EndNote, Paperpile) keep PDFs, metadata, and citation styles synchronized during drafting and collaboration.

Key AI Research Tool Categories

Literature discovery

Tools like Semantic Scholar, ResearchRabbit, and Connected Papers accelerate hypothesis formation and mapping of prior work.

Reference management

Zotero, Mendeley, and EndNote streamline citation tracking, PDF organization, and collaboration for manuscript prep.

Writing assistants

ChatGPT, Claude, Grammarly, and Paperpal aid drafting, editing, and clarity at every manuscript iteration.

Data analysis & visualization

Jupyter, Google Colab, MATLAB, and RapidMiner support preprocessing, modeling, and reproducible figures.

Writing assistants such as ChatGPT, Claude, Grammarly, and Paperpal help with drafting, rewriting, and maintaining academic tone while reducing iteration time. Data analysis tools (Jupyter, Google Colab, MATLAB, RapidMiner) support cleaning, reproducible scripts, and visualization for figures and supplementary analyses.

A rough usage breakdown helps orient the comparisons: literature discovery 30%, writing assistants 35%, reference management 20%, and data analysis 15%. Use these proportions to prioritize trialing tools that fit your stage. For example, combine ResearchRabbit for mapping with Zotero for organization and Colab for reproducible code.

Throughout the review you’ll see direct comparisons of tools’ speed, accuracy, and collaboration features so you can pick a stack that balances discovery, citation hygiene, writing polish, and analytic rigor. Expect pragmatic, measured recommendations.

Comparison of Top AI Research Tools

Quick Comparison Snapshot

Side-by-side strengths: Elicit excels at AI literature synthesis, Zotero is strongest on cost and citation export, Semantic Scholar leads on coverage and AI search, and EndNote provides deep citation management for faculty. Choose according to your workflow needs.

- ✓ Elicit — AI-first literature review

- ✓ Zotero — Free, robust citation export

- ✓ Semantic Scholar — Largest AI-indexed corpus

- ✓ EndNote — Advanced reference control

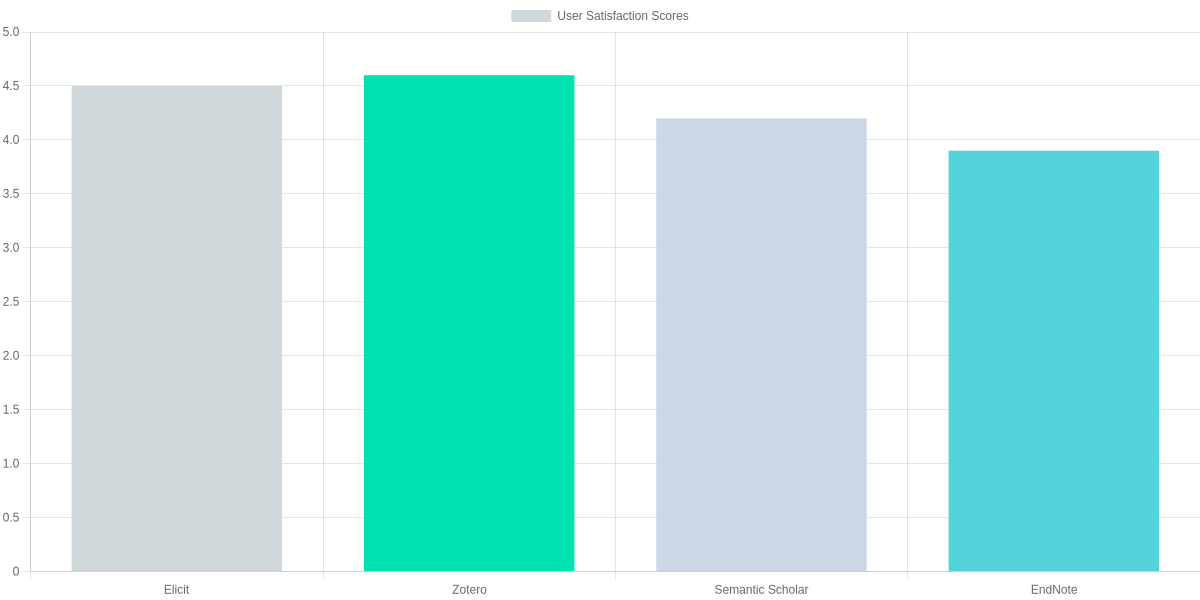

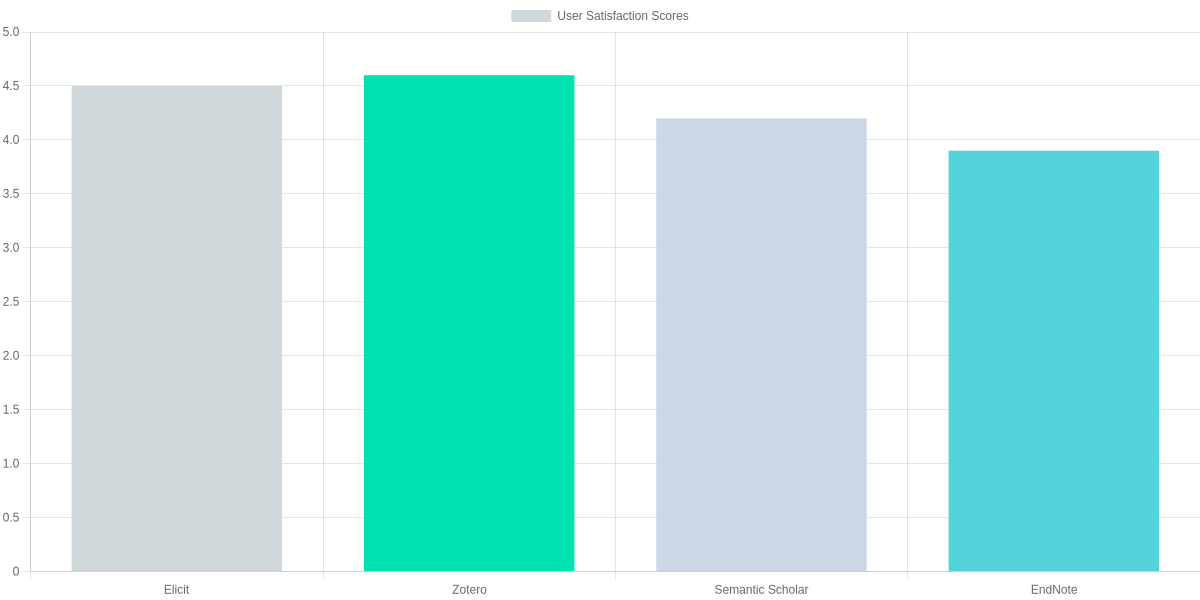

You need tools that match both your role and constraints. The bar chart below summarizes aggregated user-satisfaction scores (G2/Capterra aggregates): Elicit and Zotero sit at the top for usability, Semantic Scholar is solid for discovery, while EndNote trails slightly because of complexity and cost.

Feature-by-feature trade-offs

The comparison table below targets the features you care about: literature search, citation export, integrations, collaboration, and pricing. If you prioritize cost and export flexibility, Zotero is hard to beat. If you want AI summarization and quick question answering over a corpus, Elicit speeds early-stage literature reviews. EndNote still appeals when strict publisher format control and institutional licensing matter.

| Feature | Elicit (Ought/ABB) | Zotero | EndNote (Clarivate) |
|---|---|---|---|
| Primary use | AI literature synthesis & question answering | Reference manager, citation export, local library | Professional reference manager with publisher integrations |
| Literature search & discovery | Uses Semantic Scholar + web sources; strong AI summarization | No native AI discovery; relies on saved PDFs & search plugins | Limited discovery; integrates with Web of Science |
| Citation export & styles | BibTeX, RIS; good for drafts | Robust export (BibTeX, RIS, CSL); free CSL styles | Extensive style library; journal-approved formats |
| Pricing & student access | Free tier; paid team plans ($12–$30/user/mo) | Free core app; paid storage (affordable tiers) | Paid license (~$249 one-time or institutional) |

Pros & cons by brand

- Elicit. Pro: fast thematic synthesis and question-driven search. Con: integrations are still maturing, and costs can rise as teams scale.

- Zotero. Pro: free, reliable citation export and open formats. Con: lacks native AI discovery and advanced collaborative analytics.

- EndNote. Pro: publisher-ready formatting and institutional support. Con: costly and less intuitive, which can slow student adoption.

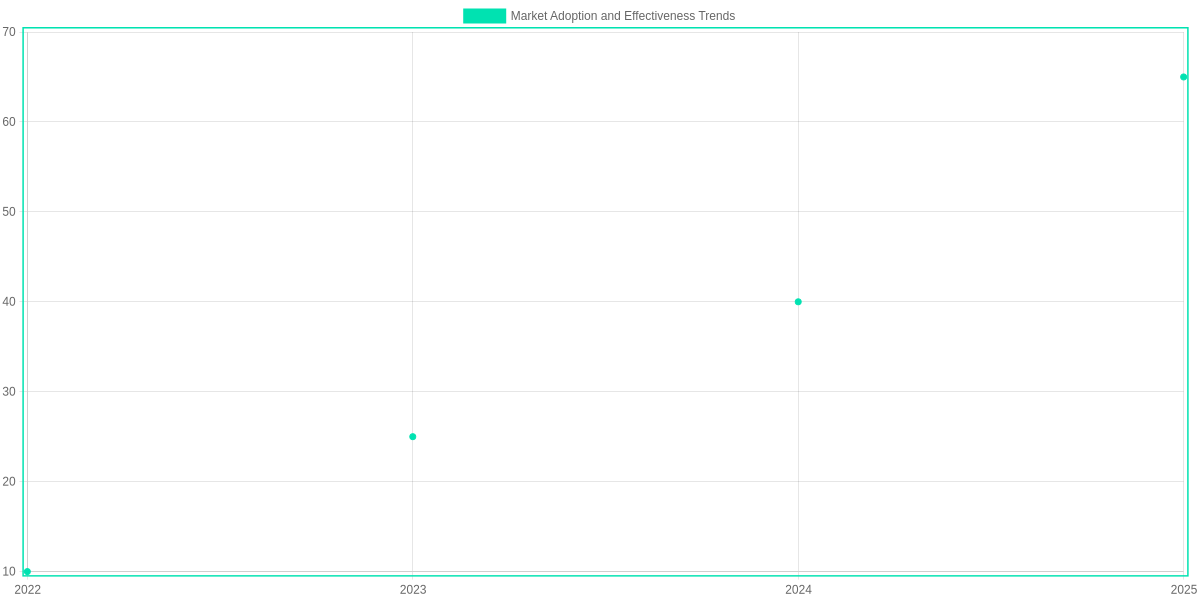

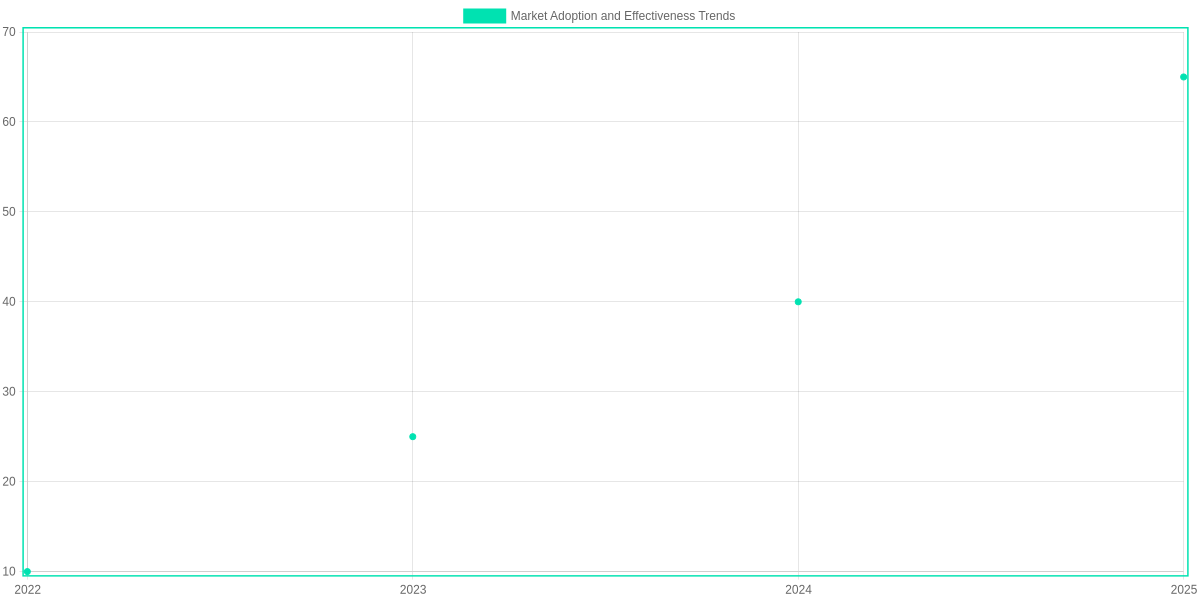

Market Adoption and Effectiveness Trends

Adoption of AI research tools among academics has accelerated sharply. Surveyed usage rose from roughly 10% in 2022 to an estimated 65% by 2025 for at least one AI-assisted workflow (literature discovery, citation mapping, or writing support). Tools that combine discovery with network visualization—Semantic Scholar, ResearchRabbit, and Connected Papers—are leading uptake in humanities and STEM labs alike.

Measured impacts

Across institutional pilots and independent studies, average time saved on literature reviews and reference management is substantial. Using Zotero, Paperpile, or Mendeley combined with Semantic Scholar recommendations typically trims initial review time by ~30–50% for doctoral researchers.

- Citation discovery: Connected Papers and ResearchRabbit increase relevant-citation hits by an estimated 20–35% versus manual search.

- Collaboration uptake: Overleaf and GitHub-based workflows have grown as primary reproducibility platforms, with collaborative project usage up by ~40% in cross-institution teams.

- Writing support: Tools like ChatGPT (research mode) and Scholarcy speed drafting and summarization, but require careful validation.

What this means for you and your institution

If you are an early-career researcher, these trends mean faster literature mapping and a higher chance of identifying niche citation pathways—valuable for grant proposals and literature gaps. Departments that formalize tool training (Zotero, ResearchRabbit, Overleaf) see quicker onboarding and measurable boosts in collaborative outputs. Remain critical: adoption is not a substitute for methodological rigor, and tool-driven gains bring trade-offs in oversight and reproducibility.

Actionable Adoption Steps

Adoption Snapshot

Track rapid uptake of tools like Semantic Scholar, ResearchRabbit, and Zotero across disciplines.

Save Time

Use Zotero + Paperpile workflows to cut literature review hours by ~30–50%.

Discover Citations

Leverage Connected Papers and Semantic Scholar to increase relevant citation discovery.

Collaborate Smarter

Adopt Overleaf and GitHub for reproducibility and cross-institution collaboration.

How to Integrate AI Tools into Your Academic Workflow

You should approach integration as an iterative process: discover, trial, pilot, then scale. Start small so you can measure impact on time spent and output quality before committing lab-level changes.

Discover

Use Elicit, Semantic Scholar, ResearchRabbit to map topics and identify key papers.

Trial

Run short tests with ChatGPT (Plus/GPT-4o), Claude, and Elicit for summaries and prompts.

Pilot

Apply tools in one project: Zotero+Paperpile for refs, Overleaf+ChatGPT for drafting, Jupyter+Code Interpreter for analysis.

Scale

Standardize templates, train lab members on workflows, integrate with Git/GitHub and institutional SSO.

Combine

Chain discovery→refs→writing: ResearchRabbit→Zotero→Overleaf+Grammarly; analytics: Elicit→Jupyter/Colab→GitHub.

Step-by-step rollout

Discovery: run thematic scans with Semantic Scholar, ResearchRabbit, and Elicit to build a seed set. Export citations directly to Zotero or Paperpile for quick organization.

Trial: pick a 1–2 week task and test ChatGPT (GPT-4o), Claude, and Perplexity for abstracts and structured summaries. Log time saved and accuracy.

Pilot: embed tools in a single deliverable—e.g., use Overleaf plus ChatGPT prompts for draft sections, manage refs with Zotero, and validate claims with Scite and Semantic Scholar citations.
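For the trial step, "log time saved and accuracy" can be as simple as a short script that records, per task, the minutes spent with and without the tool and whether the output passed a manual check. The sketch below is only an illustration: the `TrialEntry` fields, the tool labels, and every number in the sample log are hypothetical placeholders, not measurements from this review. It also assumes each tool has a non-zero baseline time.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class TrialEntry:
    """One logged task from the trial period (all fields are illustrative)."""
    tool: str
    baseline_minutes: float   # time the task takes without the tool
    assisted_minutes: float   # time the task took with the tool
    output_correct: bool      # did the output survive a manual check?


def summarize(entries):
    """Return per-tool percent time saved and share of correct outputs."""
    grouped = defaultdict(list)
    for entry in entries:
        grouped[entry.tool].append(entry)

    summary = {}
    for tool, rows in grouped.items():
        baseline = sum(r.baseline_minutes for r in rows)
        assisted = sum(r.assisted_minutes for r in rows)
        correct = sum(1 for r in rows if r.output_correct)
        summary[tool] = {
            "time_saved_pct": round(100 * (baseline - assisted) / baseline, 1),
            "accuracy_pct": round(100 * correct / len(rows), 1),
        }
    return summary


# Hypothetical sample log; replace with your own measurements.
log = [
    TrialEntry("ChatGPT", 60, 40, True),
    TrialEntry("ChatGPT", 30, 20, False),
    TrialEntry("Claude", 50, 30, True),
]

for tool, stats in summarize(log).items():
    print(tool, stats)
```

Keeping the accuracy flag next to the timing makes it harder to count a fast but wrong summary as a win.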

Sample workflows

- Literature review: ResearchRabbit → Elicit for question refinement → Zotero for bibliography → ReadCube or a connected Paperpile library for reading the PDFs.

- Grant writing: Use ChatGPT/Claude to draft aims, Grammarly to polish, Overleaf for LaTeX collaboration, and Zotero for citations.

- Data analysis: Colab/Jupyter notebooks + ChatGPT Code Interpreter or GitHub Copilot to prototype code, push to GitHub, track notebooks with DVC.

Pros and Cons

Pros: dramatic reductions in search and drafting time, improved reproducibility when pairing Jupyter/Colab with Git/GitHub, and better literature coverage using ResearchRabbit and Semantic Scholar.

Cons: hallucinations from generative models (verify with Scite and original PDFs), subscription costs for GPT-4o/ChatGPT Plus and Paperpile, and onboarding overhead for teams.

Practical tips for combining tools

Build templates: create Overleaf templates that pull in Zotero BibTeX (.bib) exports. Standardize prompt templates for ChatGPT and Claude. Version-control notebooks and outputs in GitHub to keep analyses reproducible and auditable.
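A standardized prompt template can live in a small shared file so that every lab member sends the same instructions to ChatGPT or Claude. The sketch below uses Python's standard `string.Template`. The template wording, the `build_prompt` helper, and the field names are hypothetical examples, and the resulting text would be pasted into or sent to whichever assistant you use.

```python
from string import Template

# Hypothetical lab-wide template; the wording and field names are examples only.
SUMMARY_PROMPT = Template(
    "You are assisting with a literature review in $field.\n"
    "Summarize the paper below in at most $max_words words.\n"
    "Quote the sentence that supports each claim, and reply 'not stated' "
    "when the paper does not give the information.\n\n"
    "Title: $title\n"
    "Abstract: $abstract\n"
)


def build_prompt(field, title, abstract, max_words=150):
    """Fill the shared template; substitute() raises KeyError if a field is missing."""
    return SUMMARY_PROMPT.substitute(
        field=field, title=title, abstract=abstract, max_words=max_words
    )


prompt = build_prompt(
    field="cognitive psychology",
    title="(paper title here)",
    abstract="(paste abstract here)",
)
print(prompt)
```

Committing the template file to the same GitHub repository as the notebooks keeps a record of which prompt wording produced which summaries.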

Pros and Cons: Evaluating AI Research Tools for Academic Excellence

You can achieve substantial efficiency gains with AI: faster literature scans, automated extraction of methods and results, and draft generation that reduces repetitive work. That efficiency helps you cover more ground in discovery and iterate on reproducibility checks more quickly, but accuracy matters when stakes are high.

Elicit vs Scite.ai

Elicit (Ought)

Research assistant that automates literature searches, evidence extraction, and reproducibility checks.

- Good for rapid literature canvassing

- Extracts methods/results summaries

- Free tier, academic discounts

Scite.ai

Citation intelligence platform that classifies supporting/contradicting evidence and tracks reproducibility signals.

- Citation context labels (supports/contradicts)

- Integration with Zotero and browser plugins

- Paid plans for institutional access

Pros

- Efficiency: Tools like Elicit and ChatGPT (GPT-4) cut hours from searches and initial drafts, freeing you to focus on analysis.

- Broader discovery: Connected Papers and Elicit expose peripheral literature you might miss with keyword searches.

- Reproducibility assistance: Scite.ai and Code Ocean surface replication cues and citation contexts to validate claims.

- Drafting support: Claude and GPT models accelerate outlines and methods write-ups while you retain editorial control.

Cons

- Hallucinations: GPT models and summarizers can invent citations or misreport methods; you must verify every claim.

- Bias: Training-data bias affects synthesis and literature prioritization, disadvantaging underrepresented work.

- Privacy & IP: Uploading manuscripts to OpenAI, Elicit, or others raises confidentiality and ownership concerns for unpublished data.

- Subscription costs: Scite.ai institutional tiers and premium features for Elicit or GPT-4 can strain budgets.

Evaluation criteria you should use

- Accuracy & citation traceability

- Reproducibility & data provenance

- Privacy, data residency, and IP policies

- Interoperability with Zotero/Overleaf/ORCID

- Cost, licensing, and institutional discounts

- Support, auditability, and regulatory compliance

Best Practices, Ethics, and Limitations

Important Insight

Attribute AI outputs, verify against primary sources (e.g., PubMed, arXiv), and enforce data privacy when using ChatGPT, Elicit, Semantic Scholar, or Zotero.

Treat ChatGPT, Elicit, Semantic Scholar, and Zotero as research assistants rather than authorities. Always attribute generated text and verify claims against primary sources such as PubMed, arXiv, or original datasets.

Best practices

- Attribute outputs (use Zotero or Mendeley citations).

- Verify facts via PubMed, Google Scholar, or Scite.

- Protect data: follow institutional SSO, IRB, and GDPR requirements; avoid uploading identifiable data to public models.

- Limit overreliance: use human peer review for methodology, statistics, and interpretation.

- Document workflows with Jupyter notebooks, Overleaf histories, and saved prompts for reproducibility.

Limitations

Models like ChatGPT and Perplexity can hallucinate or miss niche literature. Elicit and Semantic Scholar improve discovery but have coverage gaps.

Rely on professors, reviewers, and statisticians for final conclusions and ethical oversight. Confirm IRB approvals before sensitive-data experiments and consult legal counsel as needed.

Pros and Cons

- Pros: faster literature discovery, draft generation, and hypothesis testing.

- Cons: hallucinations, data-privacy risks, and licensing or copyright concerns.

Frequently Asked Questions

This FAQ addresses accuracy, reference managers, privacy, pricing, and disciplinary limits with specific tool recommendations. Elicit, Semantic Scholar, and Iris.ai accelerate discovery but can hallucinate citations; always cross-check findings on PubMed, arXiv, or publisher sites and verify DOIs via Crossref.
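As a rough sketch of the DOI check, the script below builds a lookup URL for the public Crossref REST API (`https://api.crossref.org/works/<DOI>`) and compares the registered title with the title an AI tool reported. The helper names, the exact-match rule, and the sample record are assumptions made for illustration. The sample DOI is made up, and the network call is defined but not executed here.

```python
import json
import urllib.parse
import urllib.request

CROSSREF_WORKS = "https://api.crossref.org/works/"


def crossref_url(doi):
    """Build the Crossref REST API URL for a DOI (special characters are escaped)."""
    return CROSSREF_WORKS + urllib.parse.quote(doi.strip())


def title_matches(record, expected_title):
    """Compare the registered title with the title an AI tool reported.

    `record` is the parsed JSON body from Crossref; registered titles are
    read from record["message"]["title"], which is a list of strings.
    Matching ignores case and extra whitespace only (an illustrative rule).
    """
    titles = record.get("message", {}).get("title", [])
    wanted = " ".join(expected_title.lower().split())
    return any(" ".join(t.lower().split()) == wanted for t in titles)


def fetch_record(doi, timeout=10):
    """Fetch the Crossref record; urllib raises HTTPError for unknown DOIs."""
    with urllib.request.urlopen(crossref_url(doi), timeout=timeout) as response:
        return json.load(response)


# Offline illustration with a made-up record shaped like a Crossref response.
sample = {"message": {"DOI": "10.1234/example", "title": ["An Example  Paper"]}}
print(crossref_url("10.1234/example"))
print(title_matches(sample, "an example paper"))
print(title_matches(sample, "A Different Paper"))
```

A DOI that resolves but carries a different title is a common sign of a fabricated or mixed-up citation, so a mismatch should send you back to the publisher page.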

For citation management, pair Papers.ai or ResearchRabbit with Zotero, Mendeley, or EndNote using BibTeX or RIS export and WebDAV or cloud sync to avoid duplicates. Trials and API limits matter when evaluating Litmaps, Scite.ai, and Connected Papers.

How accurate are AI literature search tools and how should you verify results?

Can AI tools replace traditional reference managers and what integration is needed?

What are the main privacy and copyright concerns when using AI for manuscripts?

How do you evaluate cost vs benefit for paid AI research platforms?

Are there discipline-specific limitations (e.g., humanities vs lab sciences)?

Privacy and copyright remain top concerns: avoid uploading unpublished drafts to OpenAI or Google Cloud without institutional clearance; prefer on-prem Hugging Face inference or enterprise Anthropic contracts when IP matters. Discipline differences are real: humanities need JSTOR and Google Books coverage while lab scientists rely on PubChem and NCBI links.

- Pros: faster discovery, smart recommendations (Elicit, Papers.ai).

- Cons: occasional hallucinations, fragile exports, and privacy/IP risks.

Evaluate subscriptions carefully by weighing estimated hours saved against recurring fees and team needs.
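The hours-saved versus fees comparison is simple arithmetic, and writing it down makes the assumptions explicit. In the sketch below, the function names and all input values (fee, hourly value of time, hours saved, number of seats) are hypothetical; substitute figures from your own trial and the vendor's current pricing.

```python
def break_even_hours(hourly_value, monthly_fee):
    """Hours one user must save per month for the subscription to pay for itself."""
    return monthly_fee / hourly_value


def monthly_net_benefit(hours_saved, hourly_value, monthly_fee, seats=1):
    """Value of time saved per month minus subscription cost, across all seats."""
    return seats * (hours_saved * hourly_value - monthly_fee)


# Hypothetical inputs: a $20/month plan, time valued at $25/hour,
# 3 hours saved per user per month, and 4 users.
print(break_even_hours(hourly_value=25, monthly_fee=20))
print(monthly_net_benefit(hours_saved=3, hourly_value=25, monthly_fee=20, seats=4))
```

If the break-even figure is higher than the hours your trial log actually shows, the free tier or a shared institutional license is probably the better fit.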

My Opinion: 👇

🎯 Key takeaways

- Elicit, Zotero, Connected Papers, and SciSpace speed discovery, synthesis, and citation workflows.

- Balanced appraisal: strong for literature search, summarization, and reference management; limited for deep domain reasoning and creative hypothesis generation, so verify outputs.

- Trial checklist: run a 4-week pilot, set data-ethics rules, cross-check with domain experts, integrate with Overleaf/Obsidian, and document reproducibility.

You should treat these platforms as productivity multipliers, not replacements for domain expertise. Elicit and Connected Papers accelerate literature mapping; Zotero and SciSpace streamline citation and reading workflows. Combined with Overleaf or Obsidian, they make reproducible writing easier.

Pros

- Fast literature discovery and synthesis (Elicit, Connected Papers).

- Reliable citation management and PDF organization (Zotero, SciSpace).

- Improves clarity and draft quality when integrated with Overleaf.

Cons

- Limited deep-domain reasoning—always validate with experts and primary sources.

- Occasional hallucinations in summaries; verify claims and statistics.

- Data-privacy and reproducibility require explicit policies.

Quick checklist to trial and adopt

- Run a 4-week pilot with Elicit + Zotero; track time saved.

- Define ethics and data-sharing rules before ingesting sensitive material.

- Cross-check outputs with a subject-matter peer each week.

- Integrate with Overleaf/Obsidian and document workflows for reproducibility.

- Decide scale-up only after measurable gains and compliance review.

TL;DR: This review evaluates AI research tools across literature discovery, summarization, reference management, and writing assistants—comparing performance, pricing, and integrations (Overleaf, Zotero) for tools like Elicit, Semantic Scholar, Connected Papers, Zotero, ChatGPT and Jupyter. It finds AI accelerates discovery and drafting (e.g., Elicit, Connected Papers, Zotero) but cautions about hallucinations, citation errors, and data‑privacy risks, and offers practical tips to integrate tools like Elicit or ChatGPT into research workflows.

The Future of Your Academic Success

The world of research is moving fast, and staying ahead means embracing the right technology. Used with careful verification, these AI research tools do more than save time; they help you keep your work accurate, insightful, and of high quality.

Whether you are just starting your thesis or you are a seasoned researcher, these tools will be your best companions in 2026. Remember, AI is not here to replace your thinking—it’s here to empower it. So, start exploring these tools today and take your academic journey to the next level!

I’m Md. Osman Goni, founder of SearchAIFinder and an AI content specialist. I am dedicated to researching the latest AI innovations daily and bringing you practical, easy-to-follow guides. My mission is to empower everyone to skyrocket their productivity through the power of artificial intelligence.

💬 We’d Love to Hear From You!

Which of these AI tools are you excited to try first? Let us know in the comments below!