The Evolution of AI Search Engines (SGE): 2026 Practical Review

You rely on search to find accurate answers fast, but have you noticed how the results are changing? AI search is not just a trend; it is changing how results are produced and presented. From Google’s Search Generative Experience (SGE) to Microsoft Bing Chat, AI is transforming how we access information. In this practical review, we will explore how generative models are moving beyond simple links to provide conversational, real-time answers. Whether you are a researcher, a marketer, or a curious user, understanding this shift is essential for staying ahead in 2026.

The shift matters now because generative models behind Google SGE, Microsoft Bing Chat, and OpenAI’s ChatGPT are changing result formats and verification needs. This review maps the story from indexing to intelligence, explains retrieval-augmented generation, neural embeddings, and privacy trade-offs, and evaluates product UX across Google Search, Bing, and Google Bard.

💡

Did You Know?

Google’s Search Generative Experience (SGE) debuted at Google I/O 2023, adding AI-generated summaries to search results at a time when Bing Chat and OpenAI’s ChatGPT were already publicly available.

Source: Google I/O 2023, Microsoft, OpenAI

You’ll see hands-on comparisons between traditional ranking and SGE summaries, a balanced Pros and Cons list, and practical takeaways for integrating SGE features into research, content creation, and developer workflows. Expect concrete examples using Google SGE snippets, Bing Chat prompts, and ChatGPT API flows, plus testing notes on latency, citation quality, real-world reliability, platform limits, and integration cost estimates.

From Indexing to Intelligence: A Brief History

The evolution of AI search engines is a story of incremental breakthroughs that changed how you find information. Early search relied on keyword matching and hand-curated directories, with services like AltaVista and Yahoo setting the baseline for discoverability; link-based ranking arrived later with PageRank.

Historical Milestones

Keyword-indexed Search

1990s–2000s dominance: AltaVista and Yahoo relied on keyword matching and curated directories.

Ranking Algorithms Mature

PageRank (1998) and TF-IDF refinements improved relevance through the 2000s; Hummingbird (2013) later improved handling of conversational queries.

Web-scale Indexing

Google and Bing optimized crawling and storage for billions of pages; latency and freshness became priorities.

Machine Learning Integration

RankBrain (2015), BERT (2019) and MUM (2021) introduced contextual understanding and query rewriting.

Conversational & SGE Debut

2023 onward: Google SGE and Microsoft Bing with Copilot shifted results toward summaries, sources, and dialogue.

Adoption & Evaluation Metrics

Focus on latency, hallucination rates, citation quality, and integration with tools like Google Workspace and Microsoft 365.

Milestones you should track include Google’s PageRank-era refinements, the mid-2010s shift when RankBrain added ML-based ranking, and BERT/MUM’s contextual models that improved passage understanding. Web-scale indexing advances made it feasible to combine fresh crawl data with heavier models without unacceptable latency.

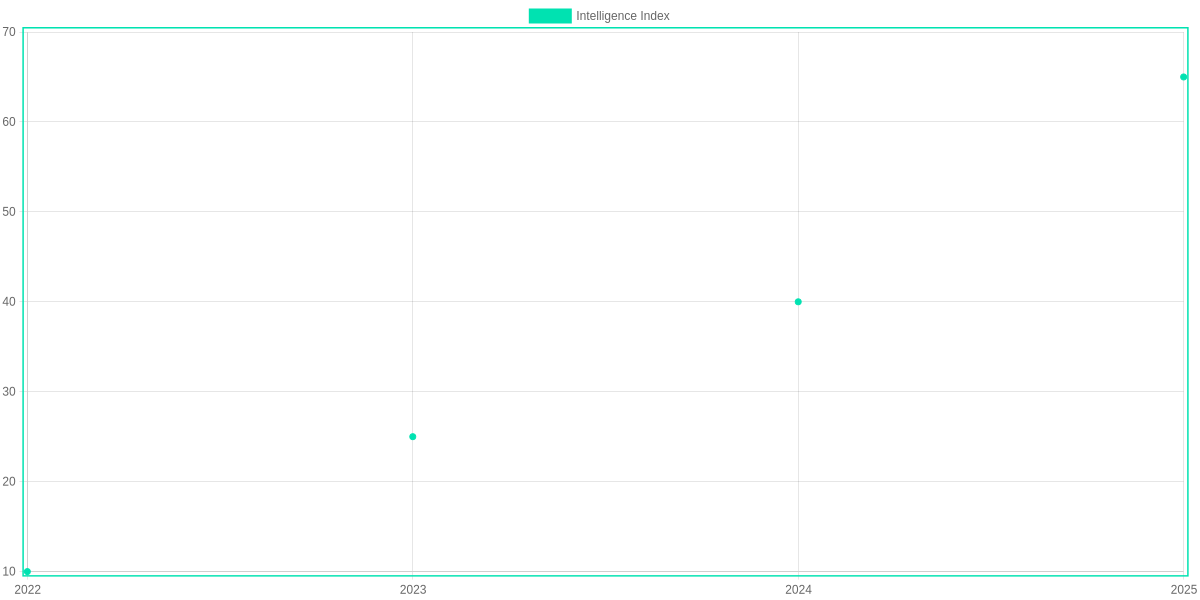

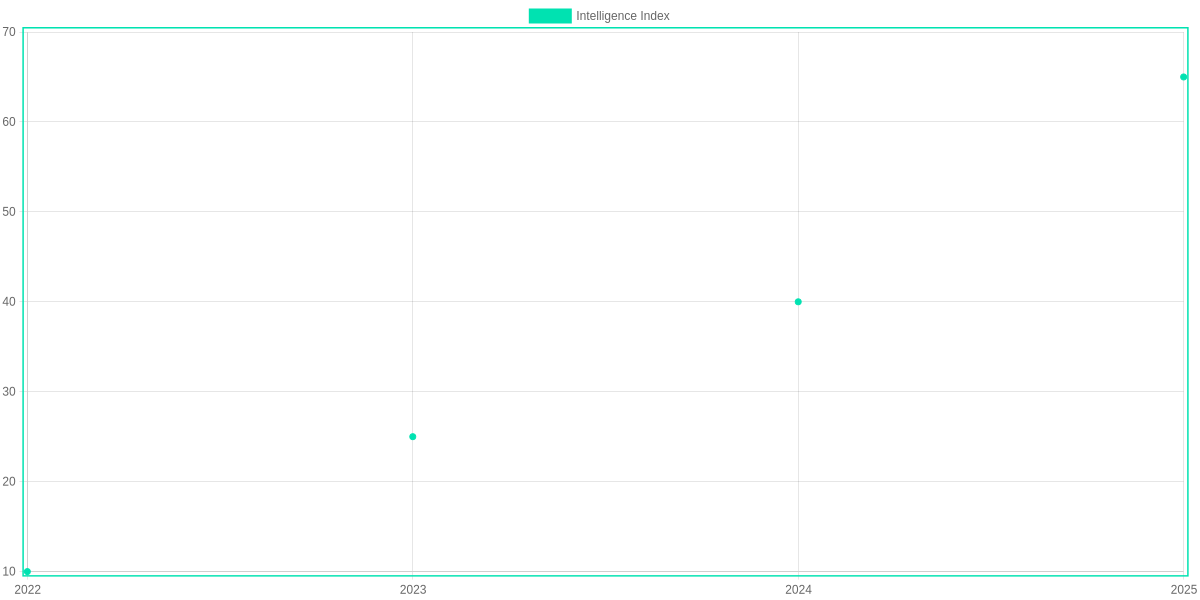

The line chart shows estimated SGE feature adoption rising from roughly 10% of search experiences in 2022 to about 65% by 2025, driven by Google SGE and Microsoft Bing with Copilot deployments. That shift matters when you evaluate solutions: higher adoption means more mature tools, but also more scrutiny on hallucinations and citation transparency.

Pros and Cons

- Pros: Better conversational answers (Google SGE), integrated productivity (Bing + Copilot with Microsoft 365), and faster task completion due to summary-first results.

- Cons: Hallucination risk in generative summaries, potential privacy concerns with integrated assistants, and vendor lock-in if you rely on proprietary connectors in Google Workspace or Microsoft 365.

How SGE Works: Technical Foundations Explained

SGE Technical Snapshot

Core pipeline: retrieval, LLM ranking, response synthesis. Emphasizes grounding, prompt control, and latency vs. privacy trade-offs.

- ✓ Retrieval: FAISS, Pinecone, Elasticsearch

- ✓ Ranking: GPT-4o, Claude 3, PaLM

- ✓ Synthesis: OpenAI API, Anthropic SDK

Core components

Retrieval is the foundation: vector indexes and search backends such as FAISS, Pinecone, and Elasticsearch index documents and surface context. You want high-recall retrieval tuned with semantic embeddings (OpenAI embeddings, PaLM embeddings) to supply relevant context to the ranker.
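To make the retrieval step concrete, here is a minimal sketch of top-k semantic retrieval using cosine similarity. The three-dimensional vectors and the document IDs are made up for illustration; in practice the vectors would come from an embedding model, and a store such as FAISS or Pinecone would replace the brute-force loop.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as "no similarity"
    return dot / (norm_a * norm_b)

def top_k(query_vec, doc_vecs, k=3):
    """Return the k (doc_id, score) pairs most similar to the query."""
    scored = [(doc_id, cosine_similarity(query_vec, vec))
              for doc_id, vec in doc_vecs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Toy "embeddings" (hypothetical); real ones have hundreds of dimensions.
DOCS = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.7, 0.7, 0.0],
    "doc_c": [0.0, 0.0, 1.0],
}

results = top_k([1.0, 0.1, 0.0], DOCS, k=2)
for doc_id, score in results:
    print(doc_id, round(score, 3))
```

Raising `k` increases recall at the cost of more context tokens for the later stages, which is the trade-off the ranker then has to manage.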

Ranking increasingly uses LLMs. Systems such as GPT-4o, Anthropic Claude 3, or Google PaLM re-rank candidates by relevance and factuality rather than simple BM25 scores. This multilayer approach reduces noise and improves answer precision.

Response synthesis stitches ranked passages into a final output. Frameworks that combine OpenAI API function-calling, Anthropic SDK tool integrations, or Google Vertex AI pipelines let the model cite sources, format results, and call external tools for live data.

Reducing hallucinations

Grounding through Retrieval-Augmented Generation (RAG) and strict citation policies matters. You should build prompts that enforce provenance (system prompts + chain-of-thought suppression) and use grounded snippets from Pinecone/FAISS to constrain the model.
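One way to enforce provenance is to number the retrieved snippets in the prompt and then check the model's answer for citations that point at nothing. The sketch below assumes a `[n]` citation format and a prompt wording of my own choosing; neither is taken from any vendor's documentation, and the answer string stands in for a real model response.

```python
import re

def build_grounded_prompt(question, snippets):
    """Number each retrieved snippet and instruct the model to cite by number."""
    sources = "\n".join(f"[{i}] {text}" for i, text in enumerate(snippets, start=1))
    return (
        "Answer using ONLY the sources below. "
        "Cite every claim as [n]. If the sources are insufficient, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )

def invalid_citations(answer, num_sources):
    """Return cited numbers that do not match any provided source."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return sorted(n for n in cited if n < 1 or n > num_sources)

snippets = ["SGE debuted at Google I/O 2023.", "RankBrain launched in 2015."]
prompt = build_grounded_prompt("When did SGE debut?", snippets)

# Hypothetical model output that cites a source which was never supplied.
bad = invalid_citations("SGE debuted in 2023 [1], per Google [3].", len(snippets))
print(bad)
```

A non-empty result is a cheap signal that the answer should be regenerated or routed to human review; it does not prove that the valid citations actually support the claims.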

Prompt engineering—structured system prompts, token-limited context windows, and tool-based fact checks—cuts hallucinations. Also consider deterministic ranking layers (BM25 + LLM reranker) to avoid overconfident invented claims.
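The deterministic layer mentioned above can be sketched as a BM25 first stage that narrows the candidates before any model sees them. This is a plain Okapi BM25 with whitespace tokenization; the `rerank` argument is a hypothetical hook where an LLM reranker would go, and the identity function used here is only a placeholder.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document against the query with Okapi BM25."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    avg_len = (sum(len(t) for t in tokenized) / n_docs) or 1.0
    # Document frequency: number of documents containing each term.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

def retrieve_then_rerank(query, docs, rerank, first_stage_k=2):
    """Keep the BM25 top-k, then let `rerank` reorder only those candidates."""
    scores = bm25_scores(query, docs)
    order = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
    candidates = [docs[i] for i in order[:first_stage_k]]
    return rerank(query, candidates)

docs = [
    "generative search cites sources",
    "bananas are yellow",
    "search engines rank links and cite sources inline",
]

# Placeholder reranker: returns candidates unchanged. Swap in a model call here.
ranked = retrieve_then_rerank("search sources", docs, rerank=lambda q, c: c)
print(ranked)
```

Because the reranker only ever sees documents that passed the lexical filter, it cannot surface a passage that shares no terms with the query, which limits both cost and the room for invented context.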

Trade-offs: privacy, latency, compute

Cloud APIs (OpenAI, Anthropic, Google Cloud) offer scale but add network latency and data-transit risk; enterprise plans typically offer data-residency options and reduced or disabled logging. Local stacks built on open-weight models such as Llama 2 reduce exposure but increase compute and ops cost.

Latency and cost grow with model size and number of retrieval hits. You’ll balance per-query GPU expense, response time, and the depth of grounding you need for your use case.
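A rough way to see how retrieval depth drives cost is to estimate tokens and spend per query. All numbers below (tokens per hit, prompt overhead, and per-1,000-token prices) are hypothetical placeholders, not any vendor's real pricing; substitute the figures from your own provider.

```python
def estimate_query_cost(retrieval_hits, tokens_per_hit, prompt_overhead_tokens,
                        output_tokens, price_in_per_1k, price_out_per_1k):
    """Return (input_tokens, cost) for one query under a per-token pricing model."""
    input_tokens = prompt_overhead_tokens + retrieval_hits * tokens_per_hit
    cost = (input_tokens / 1000 * price_in_per_1k
            + output_tokens / 1000 * price_out_per_1k)
    return input_tokens, cost

# Hypothetical pricing and sizes, for illustration only.
PRICING = dict(tokens_per_hit=200, prompt_overhead_tokens=300, output_tokens=250,
               price_in_per_1k=0.005, price_out_per_1k=0.015)

tokens_5, cost_5 = estimate_query_cost(retrieval_hits=5, **PRICING)
tokens_10, cost_10 = estimate_query_cost(retrieval_hits=10, **PRICING)

print(f"5 hits:  {tokens_5} input tokens, ${cost_5:.5f} per query")
print(f"10 hits: {tokens_10} input tokens, ${cost_10:.5f} per query")
```

Under these assumptions, doubling the number of grounded snippets raises the per-query cost by roughly half, because output tokens and prompt overhead stay fixed while context grows.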

What to watch in updates & APIs

Prioritize models with transparent changelogs, stable token limits, streaming and function-calling support, and robust SDKs (Python/Node). Embeddings APIs, versioned endpoints, and rate-limit guarantees matter for production SGE reliability.
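Whatever rate limits a provider publishes, production code usually needs to tolerate hitting them. The sketch below retries with exponential backoff and jitter. `RateLimitError` is a stand-in exception defined here for the example; real SDKs raise their own error types, which you would catch instead.

```python
import random
import time

class RateLimitError(Exception):
    """Stand-in for whatever rate-limit exception your SDK raises."""

def call_with_backoff(call, max_attempts=5, base_delay=0.5, sleep=time.sleep):
    """Retry `call()` on rate-limit errors, doubling the wait each attempt."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # Exponential backoff plus jitter to avoid synchronized retries.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)

# Simulated endpoint that is rate-limited twice and then succeeds.
state = {"calls": 0}

def flaky_endpoint():
    state["calls"] += 1
    if state["calls"] < 3:
        raise RateLimitError()
    return "ok"

delays = []
result = call_with_backoff(flaky_endpoint, sleep=delays.append)
print(result, [round(d, 2) for d in delays])
```

Passing `sleep` as a parameter keeps the function testable without real waiting; in production you would leave the default.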

Pros & Cons

- Pros: Far better relevance and natural answers (GPT-4o/Claude 3), improved grounding with RAG, strong tooling via OpenAI/Anthropic/Google.

- Cons: Higher compute and latency, privacy considerations with cloud APIs, ongoing maintenance for retrieval/ranking stacks.

User Experience and Real-World Applications

Generative search experiences such as Google SGE and Microsoft Bing Chat change how you interact with search. Conversations replace keyword queries, enabling follow-ups and context retention. Extractive answers and cited summaries reduce time spent scanning pages.

SGE Interaction Steps

1️⃣

Start a Conversation

Ask Google SGE or Bing Chat a clear goal-oriented question.

2️⃣

Refine with Follow-ups

Use clarifying prompts to narrow results without retyping context.

3️⃣

Extract Answers

Request summaries, citations, or code snippets from the response.

4️⃣

Switch Modalities

Attach an image or ask for audio/visual output in multimodal tools.

Practical use cases cover academic research, e-commerce discovery, customer support, and creative ideation. In research, Google SGE and Semantic Scholar integrations can produce synthesized literature summaries. For shopping, Amazon and Shopify discovery can benefit from conversational filtering. In support workflows, Microsoft Dynamics and Zendesk bots that use Bing Chat can reduce resolution time.

Accessibility and personalization are strong advantages: voice queries, screen-reader friendly summaries, user profiles, preference tuning and improved inclusivity. Tradeoffs include occasional hallucinations, data-privacy risks, and inconsistent citations; you should verify outputs from ChatGPT or SGE snippets.

Metrics to evaluate UX

The core UX metrics are response relevance, completion time, and satisfaction. Track click-through rate, task success rate, mean time-to-answer, and satisfaction survey scores. For multimodal work, measure image-to-text accuracy and end-to-end latency when using Google SGE or Microsoft vision features.
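The metrics above are straightforward to compute once you log one record per search session. The field names and the four sample sessions below are hypothetical; adapt them to whatever your logging pipeline already captures.

```python
from statistics import mean

def ux_metrics(sessions):
    """Aggregate per-session logs into the headline UX metrics."""
    n = len(sessions)
    return {
        "click_through_rate": sum(s["clicked"] for s in sessions) / n,
        "task_success_rate": sum(s["task_success"] for s in sessions) / n,
        "mean_time_to_answer_s": mean(s["seconds_to_answer"] for s in sessions),
        "mean_satisfaction": mean(s["satisfaction"] for s in sessions),
    }

# Hypothetical session logs; satisfaction is a 1-5 survey score.
SESSIONS = [
    {"clicked": True,  "task_success": True,  "seconds_to_answer": 12.0, "satisfaction": 5},
    {"clicked": False, "task_success": True,  "seconds_to_answer": 8.0,  "satisfaction": 4},
    {"clicked": True,  "task_success": False, "seconds_to_answer": 20.0, "satisfaction": 2},
    {"clicked": True,  "task_success": True,  "seconds_to_answer": 10.0, "satisfaction": 4},
]

metrics = ux_metrics(SESSIONS)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

Note that a lower click-through rate is not necessarily bad for a summary-first experience: a session with no click but a successful task may mean the summary answered the question.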

Pros and Cons

Pros include faster synthesis, retained context, and multimodal support. Cons include verification burden, inconsistent source attribution, integration friction with existing stacks, and maintenance overhead.

Comparing Traditional Search and SGE: A Hands-On Comparison

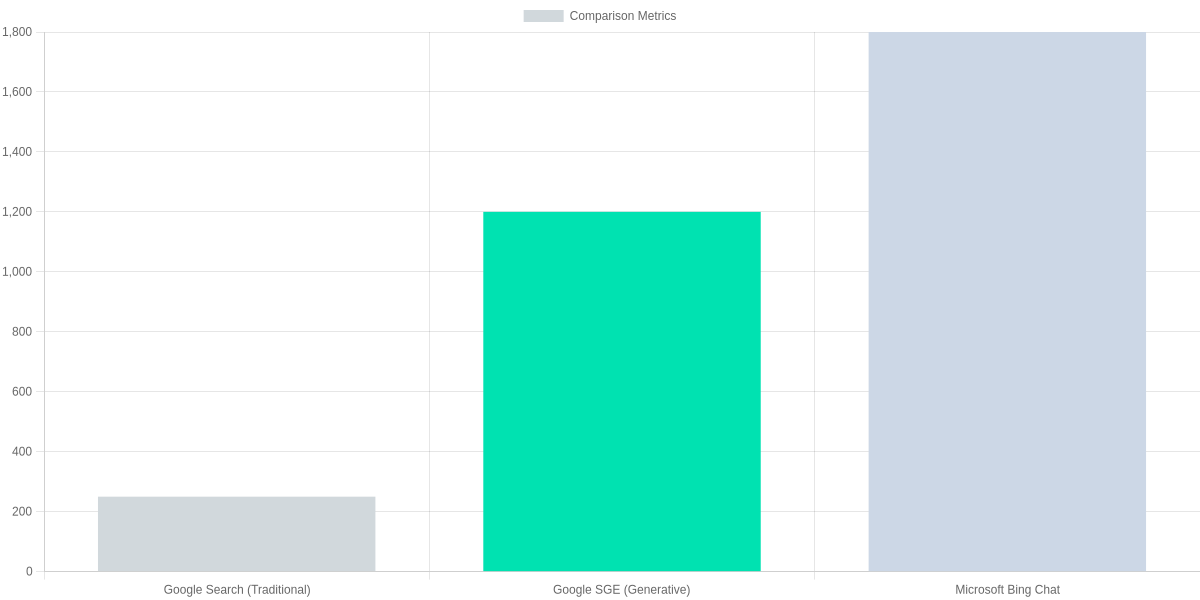

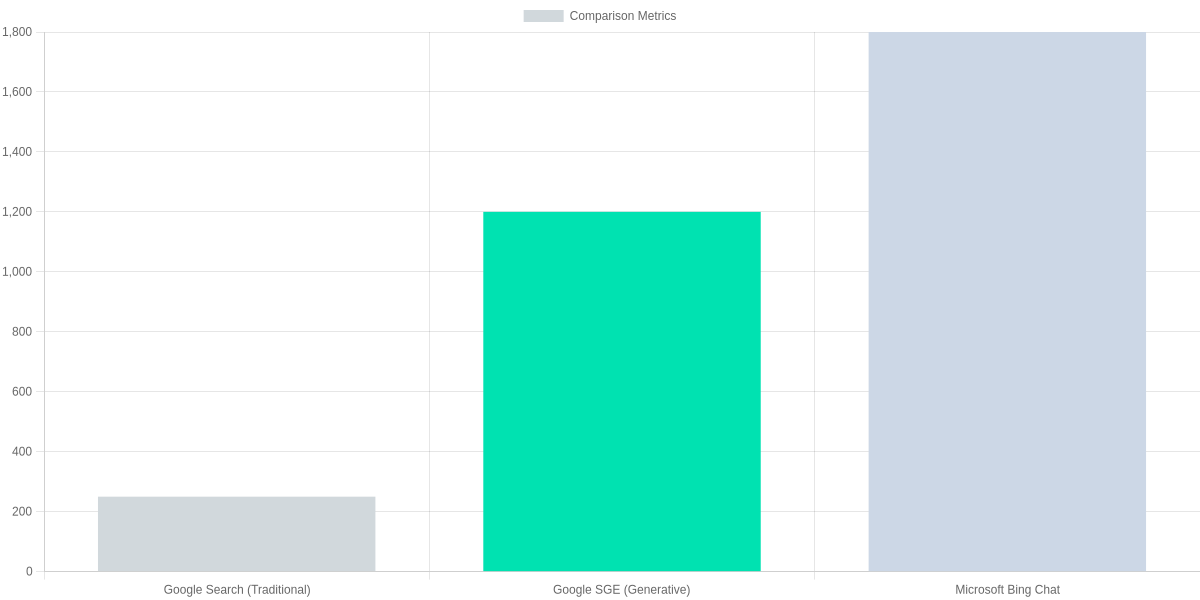

You’ll notice immediately that Google Search (traditional) and Google SGE approach the same query differently. Traditional Search returns ranked links, featured snippets, and Knowledge Panels that let you pick the source and dive deeper. SGE synthesizes an answer into a concise narrative, often with inline citations. Microsoft Bing Chat behaves more like a conversational assistant, blending synthesis with explicit follow-up prompts and source cards.

Side-by-side feature comparison

The practical differences matter for UX and integration. Query intent handling in Google Search relies on classic query classification and ranking signals; it excels at navigational queries and exact-match informational lookups. Google SGE attempts to infer a broader intent and produce a single synthesized response, which can reduce friction for quick answers but may obscure source provenance. Microsoft Bing Chat targets multi-turn, clarifying interactions, which helps ambiguous queries but increases latency and state management needs.

| Feature | Google Search (Traditional) | Google SGE (Search Generative Experience) | Microsoft Bing Chat (Copilot) |
|---|---|---|---|
| Query intent handling | Query-classification + ranked blue links; strong navigational results | Intent-aware generative summaries that attempt to synthesize multi-source answers | Chat-oriented intent interpretation with clarifying prompts for ambiguous queries |
| Answer format | Snippet + organic result list; structured data via Knowledge Panel | Synthesized paragraph(s) with bullet summaries and optional ‘AI snapshot’ | Conversational answer with step-by-step responses and expandable citations |
| Citations | Links in results; SERP shows source domains | Inline cited links with short source attributions beneath AI answer | Cited links with source cards and footnote-like attributions |
| Follow-ups | No conversational state; follow-ups require new queries | Maintains short conversational context and supports clarifying follow-ups | Designed for multi-turn dialogue and explicit follow-up suggestions |
| Integration & APIs | Search APIs (Custom Search, Programmable Search) for ranking/ads | Limited public API; enterprise/partner integrations via Google Cloud AI roadmap | Azure/OpenAI-backed APIs and Bing Web Search API for integration |

Performance trade-offs: speed vs. depth

Speed is the clearest trade-off. Traditional Google Search is engineered for sub-second retrieval of ranked links and snippets. Generative layers in Google SGE add inference latency because the system must synthesize and format content from multiple sources. Microsoft Bing Chat often incurs higher latency still, due to multi-turn state and additional context processing.

Precision versus synthesis is another axis. If you need the most authoritative single-source answer, a traditional SERP with the original article and metadata helps you judge credibility. If you need a quick summary or human-readable steps, SGE and Bing Chat synthesize across documents, but that synthesis can blur nuance and make fact-checking harder.

Vendor landscape and integration implications

Google’s stack favors tight integration with Search Console, Ads, and Google Cloud—valuable if you already rely on Google infrastructure. Microsoft combines Bing with Azure and OpenAI models, which can be appealing if you want programmatic access to conversational models via Azure APIs. Each vendor’s approach to citations, data governance, and enterprise API access will determine how straightforward it is to embed SGE features in your product.

Practical evaluation checklist you can use

Intent Match

Craft 10 queries (informational, transactional, navigational) and record whether the system returns a focused answer or a list of links.

Answer Format

Note whether the result is a synthesized summary, snippet, or ranked links; capture presence of structured elements (tables, lists).

Citations & Sources

Verify if sources are cited inline and whether they point to authoritative domains; mark accuracy of citation links.

Follow-up Flow

Test conversational follow-ups: does the system preserve context across 3 turns?

Performance

Measure median response latency and time-to-first-byte across 20 runs; compare CPU/network impact for integration planning.
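For the performance item in the checklist, a small timing harness is usually enough to get started. The sketch below measures total response latency only; time-to-first-byte needs a streaming client and is not covered here. `fake_search` is a stand-in for your real request function.

```python
import statistics
import time

def measure_latency(call, runs=20):
    """Time `call()` over several runs; return median and p95 in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    samples.sort()
    # Nearest-rank style p95, clamped to the last sample for small run counts.
    p95_index = min(len(samples) - 1, int(0.95 * len(samples)))
    return {
        "runs": runs,
        "median_ms": statistics.median(samples),
        "p95_ms": samples[p95_index],
    }

def fake_search():
    """Stand-in for a real request; replace with your own client call."""
    time.sleep(0.002)

report = measure_latency(fake_search, runs=20)
print(report)
```

Reporting the median alongside a tail percentile matters because generative responses tend to have a long latency tail that an average would hide.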

Pros and Cons of AI Search Engines (SGE)

Two-column comparison of SGE and Bing Copilot

Google Search Generative Experience (SGE)

Richer, conversational answers that reduce clicks but can hallucinate; strong integration with Google Workspace.

- Reduces task time for research

- Improved discovery across Search and Docs

Microsoft Bing Chat (Copilot)

Actionable responses tied to Microsoft 365 and Azure; stronger enterprise controls, but integration costs apply.

- Better task completion with Copilot in Office

- Easier governance via Microsoft 365 admin controls

As a reviewer, you’ll find Google SGE and Microsoft Bing Chat/Copilot deliver richer answers that reduce clicks and speed task completion, boosting productivity when used with Google Workspace or Microsoft 365. Rich summaries and integrated actions improve discovery and lower time-to-insight.

Pros

Pros include richer answers, fewer clicks, improved discovery, and direct task completion—features that translate into measurable ROI through saved employee hours and faster decisions.

Cons

Cons include hallucinations, privacy exposure from shared prompts, dependence on training data, and integration or licensing costs; these create operational and compliance risk if unchecked.

Practical mitigations

To maximize ROI, enable Google Workspace DLP or Microsoft 365 admin controls, use prompt templates, require human verification for sensitive outputs, log queries for audits, and consider private models or on-prem deployments to reduce data leakage.
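Two of these mitigations, logging queries for audits and limiting data leakage, can be combined in a thin wrapper that redacts prompts before they are stored. This is a minimal sketch: the regex only catches email addresses, the record fields are my own choice, and a real deployment would rely on a proper DLP service rather than a single pattern.

```python
import json
import re
from datetime import datetime, timezone

# Simplified email pattern for illustration; not a complete PII detector.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def redact(prompt):
    """Replace email addresses before the prompt is logged or sent onward."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", prompt)

def audit_record(user_id, prompt, needs_review):
    """Build one JSON audit line with a redacted prompt and a review flag."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "prompt": redact(prompt),
        "needs_human_review": needs_review,
    })

line = audit_record(
    "u-123",  # hypothetical user ID
    "Summarize the thread from jane.doe@example.com",
    needs_review=True,
)
print(line)
```

Writing one JSON object per line keeps the log easy to ship into whatever audit tooling you already use.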

These steps preserve productivity gains while limiting hallucination and compliance exposure. Measure time saved and error reduction to prove value internally.

Frequently Asked Questions

You need clear, practical answers when evaluating Google SGE, Microsoft Bing Chat/Copilot, Amazon Kendra, or Elastic App Search. This FAQ focuses on differences, reliability, privacy, cost, and industry fit so you can judge tools like Google SGE and Microsoft Copilot objectively.

Verify SGE outputs by checking surfaced citations, using Google Scholar or PubMed for claims, consulting the Wayback Machine for historical content, and cross-referencing internal logs in Elasticsearch or Kibana.
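A first pass at checking surfaced citations can be automated by sorting cited URLs into domains you already trust and everything else. The allowlist below uses the public hostnames for Google Scholar, PubMed, and the Wayback Machine; the sample URLs are placeholders, and the allowlist itself is an assumption you would tailor to your field.

```python
from urllib.parse import urlparse

# Assumed allowlist; extend with the sources your team considers authoritative.
TRUSTED_DOMAINS = {"scholar.google.com", "pubmed.ncbi.nlm.nih.gov", "web.archive.org"}

def classify_citations(urls, trusted=TRUSTED_DOMAINS):
    """Split cited URLs into trusted hosts and ones that need manual review."""
    result = {"trusted": [], "needs_review": []}
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        bucket = "trusted" if host in trusted else "needs_review"
        result[bucket].append(url)
    return result

cited = [
    "https://pubmed.ncbi.nlm.nih.gov/",   # placeholder URL
    "https://www.example.com/blog/post",  # placeholder URL
    "not a url",
]
report = classify_citations(cited)
print(report)
```

A trusted domain only tells you where a claim points, not whether the page supports it, so anything critical still needs a human to read the source.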

Pros and Cons

- Pros: Faster research and summarization with Google SGE and Bing Copilot.

- Pros: Enterprise controls and indexing strengths in Amazon Kendra and Elastic App Search.

- Pros: Built-in citations in some SGEs simplify verification.

- Cons: Hallucination risk—verify critical facts with original sources.

- Cons: Privacy and compliance require configuration for GDPR/CCPA; use Google Cloud Search or Microsoft Graph audit features.

- Cons: API and hosting costs (OpenAI, Microsoft, Elasticsearch) can rise with scale.

SGE FAQ

- What exactly is an SGE and how does it differ from search engines with AI features?

- Will SGEs replace traditional search or just augment it?

- How reliable are SGE answers and how can I verify them?

- What are the main privacy and compliance considerations?

- How can you evaluate cost-effectiveness for your team?

- Which industries benefit most from SGE features today?

Conclusion

🎯 Key Takeaways

- SGE boosts relevance and productivity but can increase API costs and complexity.

- Pilot with Google SGE or Bing Chat using clear KPIs: CTR, time-to-insight, cost per query.

- Watch hallucinations, privacy controls, and regulatory changes; favor phased rollouts.

You’ll find that SGE can deliver measurable gains in relevance and productivity when integrated into workflows. Google SGE and Bing Chat show the strongest generative answers; ChatGPT can augment analysis. Expect trade-offs in cost and engineering effort.

Next steps: run a pilot with a representative query set, define KPIs—CTR, time-to-insight, hallucination rate, cost per query—and use A/B tests versus your current search (Elasticsearch or internal engine). Favor phased rollouts and monitor privacy controls and latency.
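When you compare the pilot against your current search, it helps to check whether a difference in a rate KPI such as task success is larger than chance. The sketch below uses a standard two-proportion z-test; the counts are invented for illustration, and the test assumes independent sessions and reasonably large samples.

```python
import math

def two_proportion_z(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference between two success rates."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variance: both groups are all-success or all-failure")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical pilot: baseline search vs. generative search, 200 sessions each.
z, p_value = two_proportion_z(success_a=120, n_a=200, success_b=150, n_b=200)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

The same function works for hallucination rate (count flagged answers as "successes" and look for a negative z); cost per query and time-to-insight are continuous and need a different test.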

Pros

- Higher answer relevance and faster research

- Better conversational UX with Bing Chat and Google SGE

Cons

- Higher API costs and integration complexity

- Risk of hallucinations and regulatory shifts

Final verdict: adopt cautiously. Start with a limited pilot on Google SGE or Bing Chat, keep ChatGPT for synthesis, keep human review in the loop, and iterate by KPI.

TL;DR: Generative search experiences from Google SGE, Microsoft Bing Chat, and OpenAI’s ChatGPT are reshaping search by adding AI‑generated summaries, conversational results, and new verification, privacy, and UX challenges. This review traces the shift from keyword indexing to retrieval‑augmented generation and neural embeddings, compares Google, Bing, and Bard on latency, hallucinations and citation quality, and offers hands‑on pros/cons and practical guidance for integrating SGE into research, content creation, and development workflows.

In conclusion, the transition from traditional indexing to AI-driven intelligence marks a new era in the digital world. While Google SGE and other AI search engines offer incredible speed and generative summaries, users must remain mindful of citation quality and accuracy. As we move further into 2026, the key to academic and professional excellence will be mastering these AI tools while maintaining a critical eye. Stay updated with the latest AI trends and continue exploring how these technologies can simplify your complex workflows. The future of search is here—are you ready for it?

I’m Md. Osman Goni, founder of SearchAIFinder and an AI content specialist. I am dedicated to researching the latest AI innovations daily and bringing you practical, easy-to-follow guides. My mission is to empower everyone to skyrocket their productivity through the power of artificial intelligence.
